We already have an entire series on the training your team needs, covering every role. Check it out here. AI adoption and building AI literacy come with challenges for every team, but customer-facing teams face some that are distinctly their own.

Sales professionals, account managers, and customer support agents all face a second, slightly different risk that almost no other team has to deal with: AI is being used against many of the people they serve. AI-generated phishing emails now achieve click-through rates more than four times higher than human-crafted ones. A single deepfake video call cost engineering firm Arup ~$25 million. And according to the World Economic Forum's Global Cybersecurity Outlook 2026, 73% of organisations were directly affected by cyber-enabled fraud in 2025.

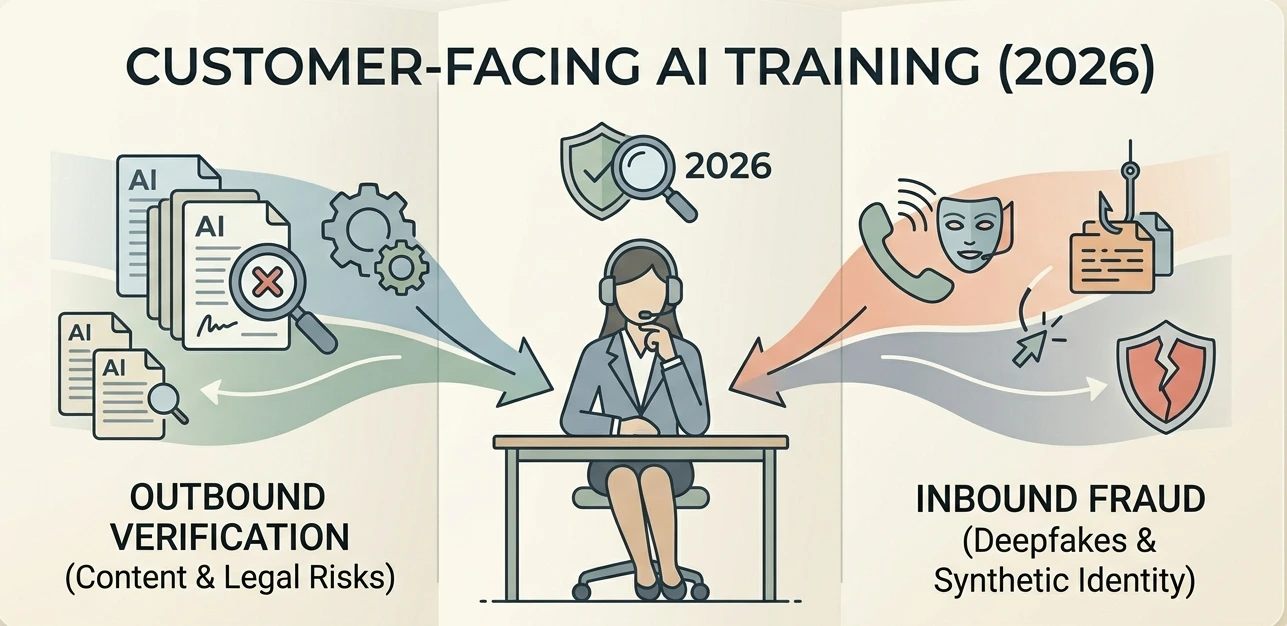

Customer-facing teams sit at the intersection of both risk directions. They produce AI-assisted communications that carry legal and reputational exposure, and they interact daily with customers who may be targets, or perpetrators, of AI-enabled fraud. Neither risk is covered by generic AI awareness training.

So let's break down what type of training would best help them.

The Dual Risk Structure: Why This Group Is Different

Most AI training focuses exclusively on outbound risk: employees using AI tools to produce outputs that might be wrong. For customer-facing teams, that's only half the problem — and the training design has to reflect that.

Training that addresses only the outbound risk leaves teams half-prepared for the environment they're actually operating in.

Sales Teams: Outbound Risk

Sales is the function where AI hallucinations most directly create contractual liability. The mechanism is specific: statements in proposals, emails, demo scripts, security questionnaires, and statements of work can influence interpretation of the final agreement. If a buyer can show those representations induced the contract, the seller may face claims even if the hallucination originated in a software tool.

The tools driving this are the AI features sales teams use most heavily. Gong's AI deal summaries and call analysis, Clari's revenue intelligence forecasts, Outreach's AI-generated email sequences, and People.ai's pipeline analysis all produce outputs that sales professionals act on and often share outward. Among enterprise sales teams using AI for deal analysis, 23% of late-stage deal losses have been traced to a qualification element that AI incorrectly identified as confirmed. The hallucination cost is invisible in pipeline dashboards and surfaces as closed-lost with whatever reason the rep attributed.

Three specific sales risks need to be in training. First, AI-generated proposal content that makes unverifiable product claims — a particular risk for teams using Seismic AI or Highspot's AI content generation for proposal assembly. Second, AI deal summaries from Gong or Clari that misrepresent buyer commitments or qualification data. Third, AI-generated security questionnaire responses that assert certifications the product does not hold.

Training exercise: a sales professional receives an AI-generated proposal section covering three product capability claims, a customer reference statistic, and a security certification. They must classify each as: can be used as-is, requires verification before use, or creates potential legal exposure without independent sourcing. They then write the specific verification step required for each flagged item.
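For teams that want to log this exercise in a structured way, the three-way classification can be sketched in a few lines of Python. This is a minimal illustration, not a recommended policy: the `Claim` fields, the `kind` labels, and the rule that unsourced statistics and certifications count as legal exposure are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Triage(Enum):
    USE_AS_IS = "can be used as-is"
    VERIFY_FIRST = "requires verification before use"
    LEGAL_EXPOSURE = "creates potential legal exposure without independent sourcing"


@dataclass
class Claim:
    text: str
    kind: str               # hypothetical labels: "capability", "statistic", "certification"
    source: Optional[str]   # where the rep confirmed the claim, or None if unconfirmed


def triage(claim: Claim) -> Triage:
    """Assumed rule of thumb for illustration only, not legal guidance."""
    if claim.source is not None:
        return Triage.USE_AS_IS
    # Assumption: unsourced statistics and certifications are treated as the riskiest items.
    if claim.kind in {"statistic", "certification"}:
        return Triage.LEGAL_EXPOSURE
    return Triage.VERIFY_FIRST


if __name__ == "__main__":
    proposal = [
        Claim("Supports single sign-on", "capability", "product documentation"),
        Claim("Real-time sync across regions", "capability", None),
        Claim("Holds the security certification named in the draft", "certification", None),
    ]
    for claim in proposal:
        print(f"{claim.text}: {triage(claim).value}")
```

In a real programme the rule inside `triage` would come from legal and product teams. The point of the sketch is only that every claim ends up with an explicit category and, where flagged, a named source still to be found.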

Customer Service Teams: Outbound Risk

Customer service is where AI hallucination reaches end customers most directly and at the highest volume — through both the tools agents use to draft responses and the AI systems that operate semi-autonomously in the same interactions.

AI hallucinations in customer service lead to an approximately 18% increase in escalation rates and contribute to around 30% of AI-related reputational incidents. For teams using tools like Kustomer's AI response generation, Gladly's AI-assisted agent platform, or Tidio's automated response features, the failure mode is a confident wrong answer delivered at volume to customers who act on it.

In 2025, a major US retailer's AI-assisted returns tool gave thousands of customers incorrect information about return window eligibility following a policy change — the model hadn't been updated. The organisation processed the returns to avoid reputational damage, at a cost that significantly exceeded what a human review step would have cost. The model wasn't wrong randomly. It was systematically wrong in a single direction, and nobody had a process for catching that before it reached customers.

Three specific risks need training. AI-suggested responses that contradict current policy — a live problem whenever pricing, eligibility, or terms change. AI-generated case summaries that omit material information the next agent needs. AI tools that provide confident answers about product features or eligibility that haven't been verified against live data. Does your team have a clear protocol for any of these?

Training exercise: a customer service agent receives three AI-suggested responses to customer queries. The first is accurate and appropriate to send. The second is tonally correct but contains a policy detail that's outdated. The third invents a resolution pathway that doesn't exist. The agent must identify which is which, explain how they'd verify the second, and describe what they'd send instead of the third.

Account Management Teams: Outbound Risk

AI tools are now generating or summarising the client records, commitments, and relationship history that account managers stake their credibility on. An AI meeting summary that misattributes a commitment, an AI-generated account health report that misrepresents usage data, an AI-drafted renewal proposal that references contract terms incorrectly — all of these damage client trust in ways that are harder to recover from than a product failure.

The tools creating this risk are the ones account teams rely on most heavily. Gainsight's AI-generated customer health scores and success plan summaries, ChurnZero's automated engagement analysis, and Totango's AI journey insights are all producing outputs that feed directly into client conversations and renewal decisions. A 2026 UC San Diego study found AI-generated summaries hallucinated 60% of the time, influencing purchase decisions. For account managers whose entire value rests on being trusted custodians of the client relationship, an AI error that reaches the client undermines the relationship in ways that a product failure often does not.

What makes these errors dangerous is that they're plausible. An account health score attributing usage data from one client to another doesn't look wrong at a glance, especially when the accounts are similar in size and sector. The failure mode isn't random error. It's confident misattribution at scale.

Training exercise: an account manager receives an AI-generated quarterly business review document for a client. It contains five specific claims about the client's usage, ROI achieved, and upcoming contract terms. Two are accurate. Two are misattributed from a different client's data. One is hallucinated entirely. The manager must identify each category and describe the client conversation they'd need to have if they'd already sent the incorrect version.

The Inbound Risk: AI-Enabled Fraud

Almost no existing AI training programme covers this. Customer-facing employees interact every day with people who may be using AI to deceive them or the organisation.

Three threat types, each requiring a different detection approach:

In early 2024, an employee at engineering firm Arup was deceived into transferring ~$25 million after a deepfake video call in which multiple colleagues — including what appeared to be the CFO — instructed the transfer. Every participant in the call except the target was AI-generated. The tell was not in the video quality. It was in the request itself — a large, urgent, out-of-process transfer authorised through a single channel with no out-of-band verification. That's the training gap the inbound risk module addresses.
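The red flags in the Arup case (large, urgent, out-of-process, single channel) can be written down as a simple checklist. The sketch below is illustrative only: the `TransferRequest` fields, the `large_amount` threshold, and the hold rule are assumptions for the example, not a description of any organisation's actual controls.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TransferRequest:
    amount: float
    urgent: bool
    follows_standard_process: bool
    channels_confirmed: int  # independent channels on which the request was confirmed


def red_flags(req: TransferRequest, large_amount: float = 10_000.0) -> List[str]:
    """Return the warning signs present; the threshold is a hypothetical placeholder."""
    flags = []
    if req.amount >= large_amount:
        flags.append("large amount")
    if req.urgent:
        flags.append("urgency")
    if not req.follows_standard_process:
        flags.append("out of process")
    if req.channels_confirmed < 2:
        flags.append("no out-of-band verification")
    return flags


def needs_hold(req: TransferRequest) -> bool:
    """Assumed rule: hold when out-of-band verification is missing and any other flag is present."""
    flags = red_flags(req)
    return "no out-of-band verification" in flags and len(flags) > 1


if __name__ == "__main__":
    request = TransferRequest(
        amount=25_000_000,
        urgent=True,
        follows_standard_process=False,
        channels_confirmed=1,
    )
    print(red_flags(request))
    print("Hold for verification:", needs_hold(request))
```

Nothing in the checklist depends on spotting a fake face or voice. It looks only at the shape of the request, which is where the tell was.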

Training exercise: the employee works through three scenarios. An urgent call from what appears to be a known client requesting a change to payment details. An onboarding application with documentation that passes visual inspection but contains inconsistencies a fraud tool like Sardine would flag. An internal email requesting urgent wire transfer approval that references recent board discussions. The employee must identify the red flags in each scenario, describe the verification protocol they'd follow before taking any action, and explain why the urgency framing in each is itself a warning signal.

Four Skills That Apply Across All Customer-Facing Roles

Four skills apply across sales, service, and account management — and almost none of them appear in standard AI awareness training.

Programme Design and Time Investment

Five modules covering both outbound and inbound risk. The sequencing is deliberate: outbound role-specific modules first, then inbound fraud, then the cross-cutting verification skills that connect both. Module four is the highest priority for immediate deployment — voice cloning attempts increased 350% year-on-year and almost no organisation has inbound fraud training in place.

| Session | Coverage | Format | Time |
|---|---|---|---|
| One | Sales outbound: claim verification | Proposal classification exercise | 75 minutes |
| Two | Customer service outbound: response review | Three-scenario classification exercise | 60 minutes |
| Three | Account management outbound: document review | QBR analysis exercise | 75 minutes |
| Four | Inbound fraud: impersonation and social engineering | Three-scenario red flag exercise | 90 minutes |
| Five | Cross-cutting skills: verification protocols | Verification protocol workshop | 60 minutes |

Approximately 6 hours across five sessions. Most organisations have some form of output verification guidance for sales and service already, even if it's not well trained. Almost none have anything that prepares customer-facing employees for AI-enabled inbound fraud. Start with module four if you're deploying under time constraints. Then work backwards.
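As a quick check on the time budget, the session lengths from the table can be summed directly. The dictionary below simply restates the table, and the variable names are illustrative.

```python
# Session lengths in minutes, as listed in the programme table.
session_minutes = {
    "One": 75,
    "Two": 60,
    "Three": 75,
    "Four": 90,
    "Five": 60,
}

total_minutes = sum(session_minutes.values())
total_hours = total_minutes / 60

print(f"{total_minutes} minutes = {total_hours:g} hours")  # 360 minutes = 6 hours
```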

Savia's role-specific AI learning paths include customer-facing content for client proposals, service interactions, and incoming fraud attempts, built around the actual tools, scenarios, and threat types these teams encounter daily.