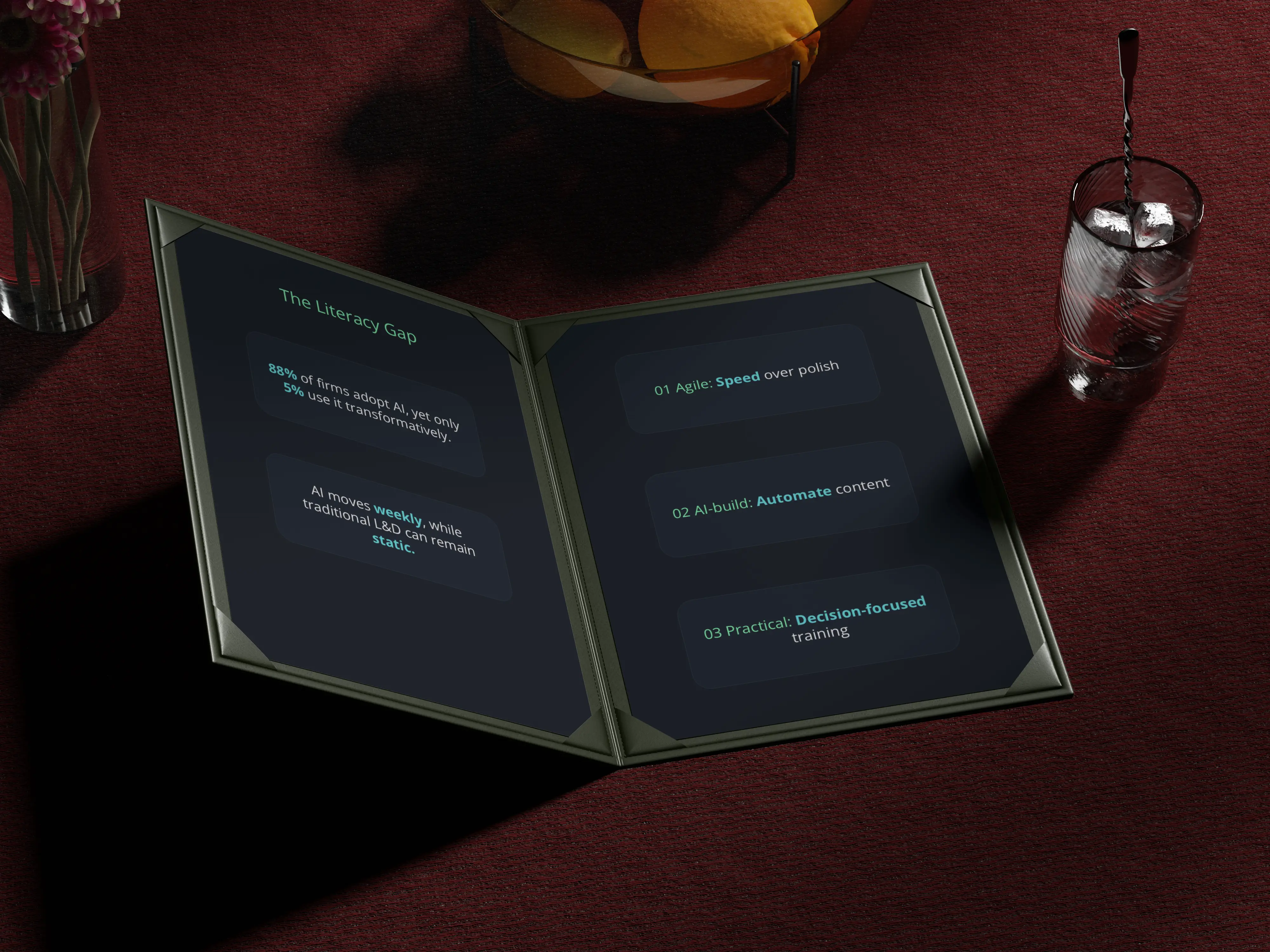

88% of organisations now use AI in at least one business function. Only 5% of employees use it in ways that actually transform their work. The rest are calling in SEAL Team Six to rescue a cat stuck in a tree.

The gap is not about access to tools. It is about training — and specifically, about whether the training approach being used was designed for a world where the technology moves as fast as AI does.

The Traditional L&D Approach — and Why It Still Matters

It is genuinely easy to build a training program. The traditional Learning & Development approach is straightforward and well-established: assess the current qualifications of your employees, define the desired state you want them to reach, identify the competency gap between the two, and then design the appropriate training blend to close it. Assessment. Gap analysis. Curriculum. Delivery.

The result, in theory, is a fully trained workforce — capable not only of using the tools in front of them but of understanding the limitations, the risks, and the ethical dilemmas that come with them. Clean. Logical. Measurable.

Here is the important thing to say about this model: it is not wrong. The underlying principles — understanding where your employees are, defining where you need them to be, and building toward that — are still the right way to think about workforce development. The competency gap framework remains the most useful conceptual tool available for this kind of work.
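For readers who like to see the framework in concrete terms, here is a minimal sketch of a competency gap calculation. The 0 to 5 scale, the `Competency` class, and the example skills are all assumptions made for illustration, not part of any standard L&D tool.

```python
from dataclasses import dataclass


@dataclass
class Competency:
    name: str
    current: int  # assessed level on a hypothetical 0-5 scale
    target: int   # desired level for the role, same scale


def competency_gaps(competencies):
    """Return (name, gap) pairs for skills below target, largest gap first."""
    gaps = [
        (c.name, c.target - c.current)
        for c in competencies
        if c.target > c.current
    ]
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


# Illustrative data only; real levels would come from your own assessment.
team = [
    Competency("prompt writing", current=2, target=4),
    Competency("output verification", current=1, target=4),
    Competency("data privacy awareness", current=3, target=3),
]

print(competency_gaps(team))
# [('output verification', 3), ('prompt writing', 2)]
```

The ordering is the useful part: the largest gaps are the ones to design training for first.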

What has changed, fundamentally, is the process and the thinking required to apply it to AI literacy. And that change is significant enough to warrant a serious rethink of how these programs are built and maintained.

The Problem With AI Literacy Is That It Doesn't Stand Still

Consider a concrete example. What your employees could meaningfully do with Claude Sonnet 4.5 may be quite different from what they can do with Sonnet 4.6. That is one minor model iteration — and already the relevant skills, limitations, and best practices have shifted. Now consider the challenge of explaining the different context window limits across GPT-4o, GPT-4.5, and Claude Sonnet 4.6 in a single training module that is supposed to remain accurate for more than a few weeks.

The difficulty is not that the underlying theory is complicated. It is not. Unlike GDPR or the EU AI Act, frameworks that are dense with legal language but essentially static once enacted, AI capabilities evolve on an almost weekly basis. GDPR, for all its length and complexity, is largely a fixed reference point. Courts may interpret it differently over time, and the European Commission may issue new guidance, but the text itself does not change between quarters.

AI literacy is different in kind, not just in degree. It is a continuously evolving skillset, where the technology your employees are being trained to use may look meaningfully different by the time the training has been designed, reviewed, approved, and deployed. That is a structural problem for traditional L&D timelines — and it is one that most organisations have not yet fully confronted.

The traditional production process, a full storyboard-to-build cycle, works well, and for many training programs it remains exactly the right approach. Deep-dive onboardings, compliance foundations, role-specific skill development: these benefit from the rigour that cycle provides. The process is not the problem.

For AI literacy specifically, the challenge is that the full production cycle, from storyboard through build, can take longer than the technology remains stable. A course that accurately describes the capabilities and limitations of a given model in month one may be quietly misleading by month four. That is not a failure of instructional design. It is a structural mismatch between production timelines and the pace of AI development, and it requires deliberate adaptation, not just better processes.

The gap between 88% adoption and 5% meaningful use is not explained by access. It is not explained by willingness. It is explained by training, or rather by the absence of it. And it points to a workforce that has been given tools without the foundation to use them well. For more on what that foundation looks like at the individual level, it is worth reading our piece on what AI literacy actually means, and why using a tool is not the same as understanding it.

Building a Program That Keeps Pace

So what does a practical, sustainable AI literacy program actually look like? The answer is not to abandon the principles of good instructional design. It is to apply them differently — with speed, iteration, and practical relevance as the primary design constraints, rather than comprehensiveness and visual polish.

Where to Start — Practically

The first step is still the one traditional L&D always recommends: understand where your employees actually are. Not where you hope they are, and not where the most optimistic interpretation of your last training completion report suggests they might be. Where they actually are. A short skills diagnostic — even an informal one — will tell you more than any assumption.

From there, the goal is not to build a program that covers everything. It is to build a program that covers the right things for the right roles, and that is designed from the outset to be updated. AI literacy is not a destination. It is an ongoing discipline — one that, like the technology itself, will need to keep moving.
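One simple way to make "designed to be updated" operational is to track when each module was last reviewed and flag anything past a review window. The sketch below assumes a 90-day window and hypothetical module names. Both are placeholders to adapt, not recommendations.

```python
from datetime import date, timedelta


def stale_modules(last_reviewed: dict[str, date], today: date,
                  max_age_days: int = 90) -> list[str]:
    """Return names of modules last reviewed more than max_age_days ago."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        name for name, reviewed in last_reviewed.items() if reviewed < cutoff
    )


# Hypothetical review log for two training modules.
review_log = {
    "context windows": date(2025, 1, 10),
    "prompt basics": date(2025, 4, 1),
}

print(stale_modules(review_log, today=date(2025, 5, 1)))
# ['context windows']
```

A check like this does not update the content for you, but it turns "keep it current" into a recurring task with a named owner.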

Think about what your existing L&D team can genuinely deliver given their current bandwidth and constraints. Put them in the best position to do that well — with the right tools, the right briefs, and a realistic production cadence. If you have gaps, or if you do not yet have an L&D function, there is no shame in looking for the right outside support rather than trying to build everything from scratch under time pressure.

If you need outside help, or if your L&D team needs a strong foundation to build from, take a look at our AI literacy training content. If we don't have exactly what you're looking for yet, we have the expertise to build and adapt it for your organisation.
