GDPR came into force in May 2018. Most organisations provided training that year, and many haven't revisited it in any meaningful way since. The assumption is that the initial compliance effort was sufficient and that periodic reminders keep the obligation met. The enforcement data doesn't support that assumption.

Cumulative GDPR fines now exceed €7.1 billion. More than 2,800 fines have been issued in total, and over 60% of that total has landed since January 2023. In 2025 alone, €1.2 billion in penalties were issued. European data protection authorities now receive 443 breach notifications per day — a 22% year-on-year increase and the first time daily reports have exceeded 400 since the regulation came into force.

The organisations paying those fines include household names with dedicated compliance teams and substantial legal budgets. The violations driving them are not obscure edge cases. They're recurring patterns — in consent, data transfers, subject access, and the handling of AI-generated data — that employee training should be preventing and, in most organisations, is not. This article covers what has changed since 2018, what enforcement is now focused on, and what employees are still consistently getting wrong. Take a look at GRC and the training gap for the broader compliance context.

Section 01

What Has Actually Changed Since 2018

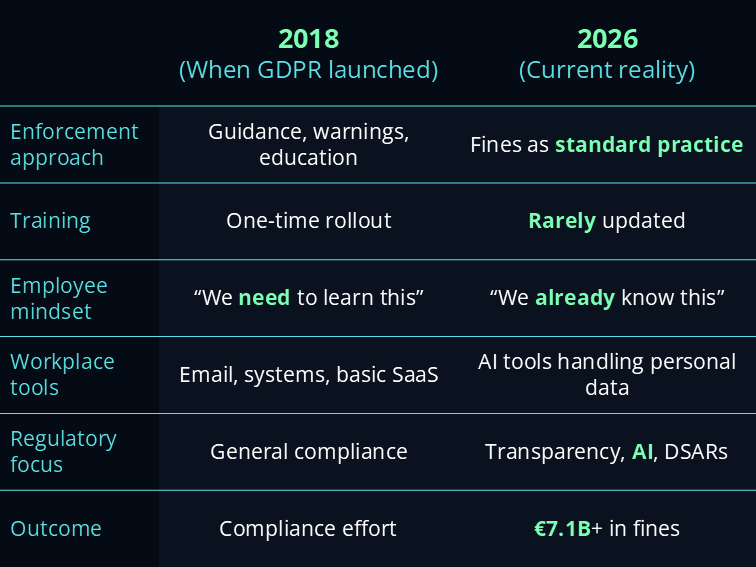

Most employees who received GDPR training in 2018 received it once. The regulatory environment they were trained for is not the one they're operating in today. Four things have materially changed.

The early years of GDPR saw significant use of warnings, reprimands, and correction orders. That phase is over. Supervisory authorities across the EU now impose fines as a matter of course for substantive violations, and the amounts are calibrated to be genuinely deterrent.

The 2025 to 2026 enforcement wave confirms that regulators have moved from guidance to consequences. Employees and managers who treat GDPR as a box-ticking exercise are operating with an eight-year-old mental model of how seriously it is enforced.

Each year, the European Data Protection Board selects a theme for coordinated enforcement action across all member state supervisory authorities. After the right to erasure in 2025, the EDPB announced that the 2026 coordinated enforcement action concerns compliance with the transparency and information obligations set out in Articles 12 to 14, the provisions ensuring data subjects are informed when their data is processed. Every organisation that processes personal data should be reviewing its privacy notices, layered information structures, and data subject communication practices now, not after the first audit letter arrives.

AI tools are processing personal data in ways that 2018 training programmes could not have anticipated. The number of grievances, whistleblowing complaints, and Employment Tribunal claims involving AI-generated content is rising sharply. AI tools used to summarise grievance meetings or analyse sentiment in employee communications may produce outputs that constitute personal data and fall within the scope of a subject access request. Most employees using these tools have no idea this is the case. The AI Act overlay on GDPR obligations is covered in what the EU AI Act means.

The European Commission's Digital Omnibus package, proposed in November 2025, includes targeted amendments to GDPR: the end of technology-neutral data protection law as AI is explicitly embedded in the framework, and a narrowing definition of personal data informed by recent CJEU rulings.

These proposals are pending and are not yet law. Until they are adopted, GDPR applies in its current form, in full.

Section 02

The Five Violations Employees Consistently Get Wrong

The enforcement data reveals clear patterns. These are not sophisticated technical failures. They're recurring gaps between what employees believe they are doing and what GDPR actually requires.

Employees across most organisations default to consent when asked about the legal basis for processing personal data. In most workplace contexts, consent is the wrong basis. And using it incorrectly creates compounding problems, because consent must be freely given, specific, informed, and withdrawable.

Choosing the wrong basis is expensive. LinkedIn Ireland was fined €310 million for using an incorrect legal basis for advertising and analytics. It had claimed the processing was necessary to perform a contract with users, a basis that didn't withstand regulatory scrutiny. For most internal HR and employee data processing, the correct bases are legitimate interest or legal obligation. Consent is rarely appropriate in an employment relationship because the power imbalance means it usually cannot be genuinely freely given.

Training implication

Employees need to identify the correct lawful basis for the specific data processing they perform in their role. Stating "we have consent" as a generic answer is not adequate and creates active compliance exposure.

The UK ICO reports that human error causes 90% of breaches: not malicious attacks, just people making mistakes because they're rushed, confused, or don't know what the rules require. GDPR does not care whether a breach was intentional. An email containing personal data that is sent to the wrong recipient is still a personal data breach and can trigger reporting obligations.

Most employees don't know that a misdirected email can be a reportable breach. They don't know that the supervisory authority must be notified within 72 hours of the organisation becoming aware of the breach. They don't know that failing to report, rather than the breach itself, often results in the most significant regulatory action. With human error behind the large majority of breaches, employee awareness of what constitutes a reportable incident addresses the most directly preventable risk category in GDPR compliance.

Training implication

Employees need a clear, practised mental model for what constitutes a reportable breach and a known, tested escalation pathway. The culture around reporting matters as much as knowledge of the obligation.
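To make the 72-hour window concrete, the short Python sketch below computes the notification deadline from the moment the organisation becomes aware of a breach. It is an illustration only: the function names and the example incident are hypothetical, and it assumes the window runs as 72 consecutive clock hours, weekends included.

```python
from datetime import datetime, timedelta, timezone

# Window for notifying the supervisory authority, counted from the
# moment the organisation becomes aware of the breach.
NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at: datetime) -> datetime:
    """Return the latest time for notifying the supervisory authority."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return aware_at + NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline; negative once it has passed."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


# Hypothetical incident: a misdirected email noticed on a Friday
# afternoon (6 March 2026, 16:30 UTC).
aware = datetime(2026, 3, 6, 16, 30, tzinfo=timezone.utc)
deadline = notification_deadline(aware)
print(deadline.isoformat())
```

In this example the deadline falls on the following Monday afternoon, which is why an escalation pathway that only works during office hours leaves very little margin.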

This is the gap that 2018 training could not have anticipated and that most programmes have not yet been updated to address. Employees routinely paste customer names, employee records, client data, and internal documents into AI tools without understanding that doing so constitutes a data transfer that must comply with GDPR.

Consumer tools, including AI assistants operating on public infrastructure, lack proper logging controls. There's no data processing agreement in place, no audit trail, no control over where the data goes or how it's used by the model provider. The same problem applies to workplace messaging apps used informally: data shared through unapproved channels leaves the organisation's control and creates accountability gaps that are very difficult to close retroactively.

Training implication

Employees need specific, practical guidance on which AI tools are approved for use with personal data, what categories of data must never enter unapproved tools, and what to do when they're unsure. This is not a topic a 2018-era awareness module covers.
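One way to turn that guidance into something employees can check quickly is a simple lookup of which data categories each tool is approved for. The Python sketch below is a hypothetical illustration: the tool names, the category labels, and the rule that unlisted tools may only receive public data are all assumptions, not a description of any real product or policy.

```python
# Hypothetical policy table. Tool names and data categories are
# placeholders chosen for illustration only.
APPROVED_TOOLS = {
    "enterprise-assistant": {"public", "internal", "personal"},
    "code-helper": {"public", "internal"},
}

# Assumption: a tool that is not listed may only receive public data.
DEFAULT_ALLOWED = {"public"}


def may_use(tool: str, categories: set[str]) -> bool:
    """True only if every data category is approved for this tool."""
    allowed = APPROVED_TOOLS.get(tool, DEFAULT_ALLOWED)
    return categories <= allowed


# A consumer chatbot that is not on the list, asked to handle
# personal data: the check refuses.
print(may_use("unknown-chatbot", {"personal"}))
```

The value of a table like this is less in the code than in forcing the organisation to decide, tool by tool, what is actually approved, so that "when unsure" has a defined answer.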

Data subject access requests have become, in the words of employment lawyers who handle them, the first move in almost every employment dispute, made before anyone has set foot near a tribunal. In 2024 to 2025, the ICO received nearly 43,000 complaints from individuals about how their personal data is handled, the majority relating to the right of access. Every DSAR should be treated, from receipt, as a potential litigation document.

Most employees who receive or process a DSAR don't know the one-month response deadline. They don't know what the response must contain. They don't know that AI-generated outputs about a person are in scope. And managers who delete emails before responding to a DSAR have committed a secondary violation — often a more serious one than the original issue the DSAR was exploring.

Training implication

Every manager and anyone in HR, legal, or customer-facing roles needs specific training on DSAR receipt, processing, and response. Generic awareness that the right of access exists is not sufficient.
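The one-month deadline is easy to miscount, so a worked calculation can help. The Python sketch below takes the date a DSAR is received and returns the corresponding calendar date in the following month, falling back to the last day of that month when it is shorter. The function name is hypothetical, the optional weekend roll-forward is an assumption of this sketch, and public holidays and permitted extensions are not handled.

```python
import calendar
from datetime import date, timedelta


def dsar_due_date(received: date, roll_weekend: bool = False) -> date:
    """Corresponding calendar date one month after receipt.

    If the following month is shorter, clamp to its last day.
    The optional weekend roll-forward is an assumption of this
    sketch; public holidays are not considered.
    """
    year = received.year + (received.month // 12)
    month = received.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    due = date(year, month, min(received.day, last_day))
    if roll_weekend:
        while due.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
            due += timedelta(days=1)
    return due


# Hypothetical request received on 31 January 2026.
print(dsar_due_date(date(2026, 1, 31)))
print(dsar_due_date(date(2026, 1, 31), roll_weekend=True))
```

Recording the due date at the moment of receipt, rather than when the request reaches the right team, is what keeps informal routing delays from eating into the response period.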

A Polish DPA fine of approximately €4 million was issued after a processor's misconfigured server exposed sensitive employee data of thousands of workers. The authority explicitly cited insufficient ongoing monitoring and verification of the processor's technical and organisational measures — because controllers are held liable when a processor fails.

Employees who share data with vendors, use third-party platforms, or onboard new tools routinely do so without understanding that the controller remains accountable for how that processor handles the data. A signed data processing agreement is necessary but not sufficient. Sharing sensitive health data with subcontractors without valid contracts was one of the primary violations in 2025 enforcement cases. Sign data processing agreements with all vendors, and maintain active visibility into the processing chain rather than treating the DPA as a one-time document.

Training implication

Employees responsible for vendor selection, procurement, and tool onboarding need specific training on what a DPA must contain, how to evaluate a processor's technical and organisational measures, and what ongoing monitoring means in practice for their role.

Section 03

The Enforcement Categories Driving the Biggest Fines

Understanding where regulators are focusing attention helps organisations prioritise their training gaps. Three enforcement categories dominate the 2025 to 2026 picture.

€1.2B (GDPR enforcement, 2025): penalties issued in 2025 alone. Over 60% of cumulative fines have landed since January 2023.

€1.2B (Meta, cross-border transfer fine): Meta's fine for unlawful EU-US data transfers. TikTok received €530M in a separate transfer case in 2025.

443 (Kiteworks, breach notifications, 2026): breach notifications received per day by European DPAs, a 22% year-on-year increase.

Cross-border data transfers

Cross-border transfer violations continue to drive the highest individual penalties. The €1.2 billion Meta fine and the €530 million TikTok penalty are both transfer cases.

Standard contractual clauses alone are not sufficient. Organisations must conduct transfer impact assessments, apply technical safeguards, and maintain continuous oversight.

Employees involved in international data sharing, cloud platform selection, or vendor contracts involving non-EU processing must understand this obligation at a practical level, not just as a legal concept.

Consent and cookie practices

The CNIL and other supervisory authorities now actively test websites rather than waiting for complaints. Making cookie rejection harder than acceptance is a violation. Placing cookies before consent is obtained is a per-session violation. Pre-ticked boxes do not constitute valid consent.

These are not new rules. They are 2018 rules that are now being systematically enforced. Organisations that haven't audited their consent mechanisms since implementation are operating on borrowed time.
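The three cookie failures described above can be expressed as a small self-audit. The Python sketch below is a hypothetical illustration: the `BannerAudit` fields are assumed observations from manually testing a banner, and the sketch assumes that only non-essential cookies are counted.

```python
from dataclasses import dataclass


@dataclass
class BannerAudit:
    """Hypothetical observations from testing a cookie banner."""
    clicks_to_accept: int
    clicks_to_reject: int
    pre_ticked_boxes: bool
    cookies_set_before_choice: int  # assumption: non-essential cookies only


def consent_findings(audit: BannerAudit) -> list[str]:
    """List the consent problems described in the text, if present."""
    findings = []
    if audit.clicks_to_reject > audit.clicks_to_accept:
        findings.append("rejecting is harder than accepting")
    if audit.pre_ticked_boxes:
        findings.append("pre-ticked boxes are not valid consent")
    if audit.cookies_set_before_choice > 0:
        findings.append("cookies placed before consent")
    return findings


# Hypothetical banner: one click to accept, three to reject,
# and two cookies already set on page load.
audit = BannerAudit(1, 3, False, 2)
for finding in consent_findings(audit):
    print(finding)
```

A check like this does not replace a proper review, but it mirrors the kind of test a supervisory authority can run on a website without waiting for a complaint.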

Transparency obligations in 2026

The EDPB's 2026 coordinated action concerns compliance with Articles 12 to 14 — privacy notices, layered information architectures, and the clarity of communications to data subjects.

This is the stated priority of the body coordinating European data protection authorities.

This is not a background risk. It is the coordinated focus of every member state supervisory authority this year.

Section 04

What Good GDPR Training Looks Like in 2026

The compliance failure isn't usually that organisations have done nothing. It's that they've provided training that was adequate in 2018 and haven't updated it to reflect how people actually work today.

Role-specific, not universal. A customer service agent's GDPR exposure is different from a procurement manager's, which is different again from an HR professional's or a developer's. A single annual module covering all of them equally produces awareness without capability. It produces the specific kind of false confidence that makes employees believe they already understand something they don't.

Scenario-based, not principles-based. Knowing that GDPR requires a lawful basis for processing doesn't tell an employee what to do when a client sends an unencrypted spreadsheet of customer data, or when a colleague asks them to forward an internal investigation report. Real-work scenarios produce behaviour change. Abstract principle statements produce quiz pass rates.

AI-aware. Employees using AI assistants, summarisation tools, and automated workflows are creating GDPR exposure their 2018 training didn't cover. Regular, role-specific training that reflects the actual tools and contexts employees encounter in 2026 is the only training that addresses the actual risk. The programme design principles that apply to GDPR training are the same as those covered in building an AI literacy programme.

The Design Test

If your GDPR training has not been updated since 2018, it doesn't cover: AI tools as vectors for personal data transfer, the current enforcement posture of supervisory authorities, the 2026 coordinated focus on transparency obligations, or the DSAR risk landscape in employment disputes. It is not 2026 training. It is 2018 training being delivered in 2026.

GDPR Training Readiness — Questions to Ask

Has training been updated since 2018 to cover AI tools, current enforcement posture, and the 2026 transparency focus?

Is training role-specific — does HR get different content from procurement, customer service, and development teams?

Do employees know the 72-hour breach notification window and what constitutes a reportable incident in their daily work?

Do employees who use AI tools understand which are approved for use with personal data and which are not?

Does anyone in HR, legal, or customer-facing roles have specific DSAR receipt and response training — not just awareness of the right of access?

Do employees involved in vendor selection and procurement know what a DPA must contain and what ongoing processor monitoring requires?

Frequently Asked Questions

GDPR 2026 — Common Questions

Answers to the questions compliance leads, HR teams, and L&D professionals most commonly ask when reviewing GDPR training for 2026.

What has changed about GDPR since 2018?

Four things have materially changed. Enforcement has shifted from education to punishment: the phase of warnings and correction orders is over. The EDPB's 2026 coordinated enforcement focus is transparency obligations under Articles 12 to 14. The intersection with AI has created new exposure: AI-generated outputs may constitute personal data in scope of subject access requests. And the Digital Omnibus proposals to amend GDPR are in progress but not yet law. GDPR applies in full until they are adopted.

What are the most common GDPR violations employees make?

Five recurring violations dominate the enforcement data. Treating consent as the default lawful basis when most workplace contexts require legitimate interest or legal obligation. Not recognising a reportable breach — most employees don't know the 72-hour notification window. Using personal AI tools with personal data without data processing agreements in place. Mishandling DSARs — not knowing the one-month deadline or that AI-generated outputs are in scope. And assuming third-party processors bear the compliance responsibility when controllers remain accountable for how processors handle data.

What is the EDPB's 2026 enforcement focus?

The EDPB announced that the 2026 coordinated enforcement action concerns compliance with the transparency and information obligations set out in Articles 12 to 14 — the provisions ensuring data subjects are informed when their data is processed. This follows coordinated action on the right to erasure in 2025. Every organisation processing personal data should be reviewing its privacy notices and data subject communications now, not after the first audit letter arrives.

How does GDPR apply to AI tools employees are using?

Pasting personal data into consumer AI tools constitutes a data transfer that must comply with GDPR. Consumer AI tools lack data processing agreements, audit trails, and control over where data goes. AI-generated outputs about a person — meeting summaries, sentiment analyses, grievance notes — may constitute personal data and fall within the scope of a DSAR.

Most employees using these tools have no idea this is the case, and 2018-era training could not have addressed it. See what the EU AI Act means for the regulatory overlay.

What does good GDPR training look like in 2026?

Role-specific rather than universal — a procurement manager's exposure differs fundamentally from a customer service agent's. Scenario-based rather than principles-based — scenarios that mirror real work produce behaviour change, abstract principles produce quiz pass rates. And explicitly updated to cover AI tools and current enforcement priorities.

If your training hasn't been updated since 2018, it is not 2026 training. The same design principles are covered in how to build an AI literacy programme for your team.

GDPR compliance is not a one-time event, and 2018 training is not 2026 training.

Savia's GRC learning paths include role-specific data protection content built around the violations employees actually cause — updated for the current enforcement environment and the AI-era workplace your teams are operating in.