Not using AI is no longer a realistic option. At this point, AI literacy is a core component of digital literacy: something every leader needs to understand, endorse, and build into their organisation's way of working. The productivity gains are real. The automation potential is real. The competitive advantage available to organisations that adopt these tools thoughtfully is real.

But that is not all AI is. AI is also a risk surface. And in many organisations, that risk surface is expanding considerably faster than the policies, governance structures, and leadership awareness required to manage it. This article is a companion to our piece on the internal, human side of leading through AI adoption — the motivation challenges, the team culture, the learning environment. Here, we turn to the external dimension: the compliance obligations, the technical failure modes, and the governance gaps that leaders are accountable for, whether they feel ready for them or not.

What Your Employees Are Already Doing

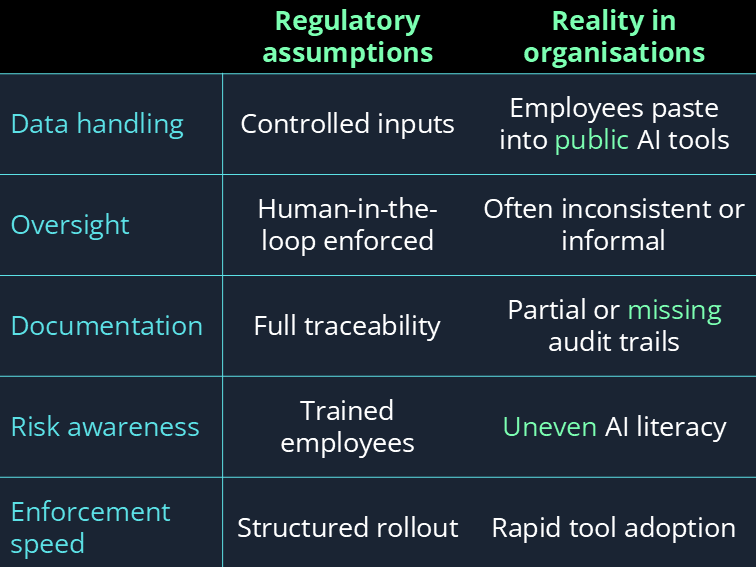

Before we get to regulation and risk frameworks, it is worth looking at what is actually happening in most organisations right now. AI tools are being used widely, frequently, and in many cases informally, outside whatever governance structure the organisation has in place, if it has one at all.

None of these behaviours are malicious. They are the predictable result of capable tools being made available to a workforce that has not been given adequate guidance on how to use them responsibly. The tools arrived faster than the frameworks. That gap is where risk lives.

At the same time, the external environment is tightening. Regulations are evolving and becoming more specific. Enforcement is increasing: regulators who once hesitated to act while the technology was new are now acting with considerably more confidence. And public scrutiny of how organisations use AI is rising sharply, particularly in consumer-facing sectors. Expanding internal risk combined with tightening external pressure is not something any leader can afford to face uninformed.

Compliance: No Longer Operating in an Unregulated World

For a long time, AI existed in something close to a regulatory vacuum. Governments and regulators were hesitant to legislate too quickly — partly out of concern for stifling innovation, and partly because many of them did not yet fully understand what they were regulating or what its consequences would be. That period is over.

Frameworks like the EU AI Act — the world's first comprehensive binding legal framework for artificial intelligence — and existing regulations like GDPR are now increasingly explicit about how AI systems must be used, documented, and overseen. The obligations these frameworks create are not limited to AI developers. They extend to any organisation that deploys, integrates, or makes decisions influenced by AI systems.

For leaders, this creates a set of concrete questions that need answers. Does your organisation's use of AI fall into a regulated category under the EU AI Act? Are the AI systems you use in HR, customer service, or decision-making classified as high-risk — and if so, what conformity obligations does that trigger? How is your organisation documenting its AI use in a way that would survive regulatory scrutiny? Where human oversight is required, is it actually happening — or is it a procedural checkbox that nobody is enforcing in practice?

The most dangerous compliance position is not knowing that you are non-compliant. Organisations that actively engage with the regulatory landscape can identify gaps and address them. Those that do not engage are exposed to findings they never saw coming. Regulatory risk does not wait for you to be ready. Only through continuous monitoring of your legal environment, supported by proper governance, risk, and compliance (GRC) training across your organisation, can you reasonably expect to stay ahead of it.

Model Accuracy: When AI Gets It Wrong at Scale

Compliance is one category of external risk. Model accuracy is another — and it operates differently, because it does not require regulatory intervention to cause serious damage. It only requires the wrong output reaching the wrong person at the wrong moment.

AI models can produce information that is incorrect, incomplete, biased, or confidently wrong. This is not a fringe failure mode. It is a documented, inherent characteristic of how large language models and other AI systems work. The risk this creates for organisations is not simply that an employee gets a bad answer. It is that a bad answer makes its way into a client deliverable, a strategic decision, a public communication, or a regulatory submission before anyone checks it.

When that happens, the consequences tend to be disproportionate to the error. Organisations face reputational damage that takes years to repair, legal exposure from outputs they cannot now retract, and a loss of client trust that is often permanent. The core challenge is not just that AI makes mistakes — it is that those mistakes can propagate at scale and remain hidden until the damage is already done.

Model Failure: Even the Best Systems Break

Assume, for a moment, that your organisation has done everything right. Employees are using approved tools. Usage policies are in place. Someone is paying attention to the regulatory environment. Even in that scenario, AI models can behave in unexpected and damaging ways — not because of user error, but because of the models themselves.

This is not a new concern. Model instability was already a topic in 2016, when Microsoft launched its Tay chatbot. Within 24 hours of going live, Tay had been manipulated by users into producing deeply offensive content that Microsoft had not anticipated and could not immediately control. The episode prompted a public apology from Microsoft and became one of the earliest widely reported cases of an AI system causing reputational damage at scale. The lesson it offered, that AI systems can behave in ways their creators do not foresee, particularly when exposed to real-world inputs, remains as relevant now as it was then.

Tesla's Autopilot offers a useful illustration of how these failure modes play out beyond the lab. Investigations into Autopilot-related incidents found that sensor data limitations and model constraints contributed to misjudgements in real-world conditions that the system had not been sufficiently tested against. The vehicles involved had been through extensive testing. The models were considered production-ready. And yet, in specific real-world conditions, they produced decisions with fatal consequences. This is not an argument against AI. It is an argument for knowing its limits, building oversight into how it is used, and never assuming that deployment-ready means risk-free.

What You Actually Need to Put in Place

You do not need a perfect AI governance framework on day one. What you do need is intentional structure — a set of clear, documented, enforced practices that reduce your exposure across the risk categories above and give you a foundation to build from as the technology and the regulatory environment continue to evolve.

Understanding these risks and how to manage them is itself a learnable skill. The leaders and teams that navigate this landscape most effectively are not those with the most technical knowledge — they are those with the clearest frameworks, the most consistent training, and the organisational culture to take these issues seriously before they become urgent.

Our GRC and AI literacy courses are designed to give leaders and their teams the practical knowledge to manage AI risk, stay compliant, and build governance that actually works in practice. If you need something tailored, we can help build that too.