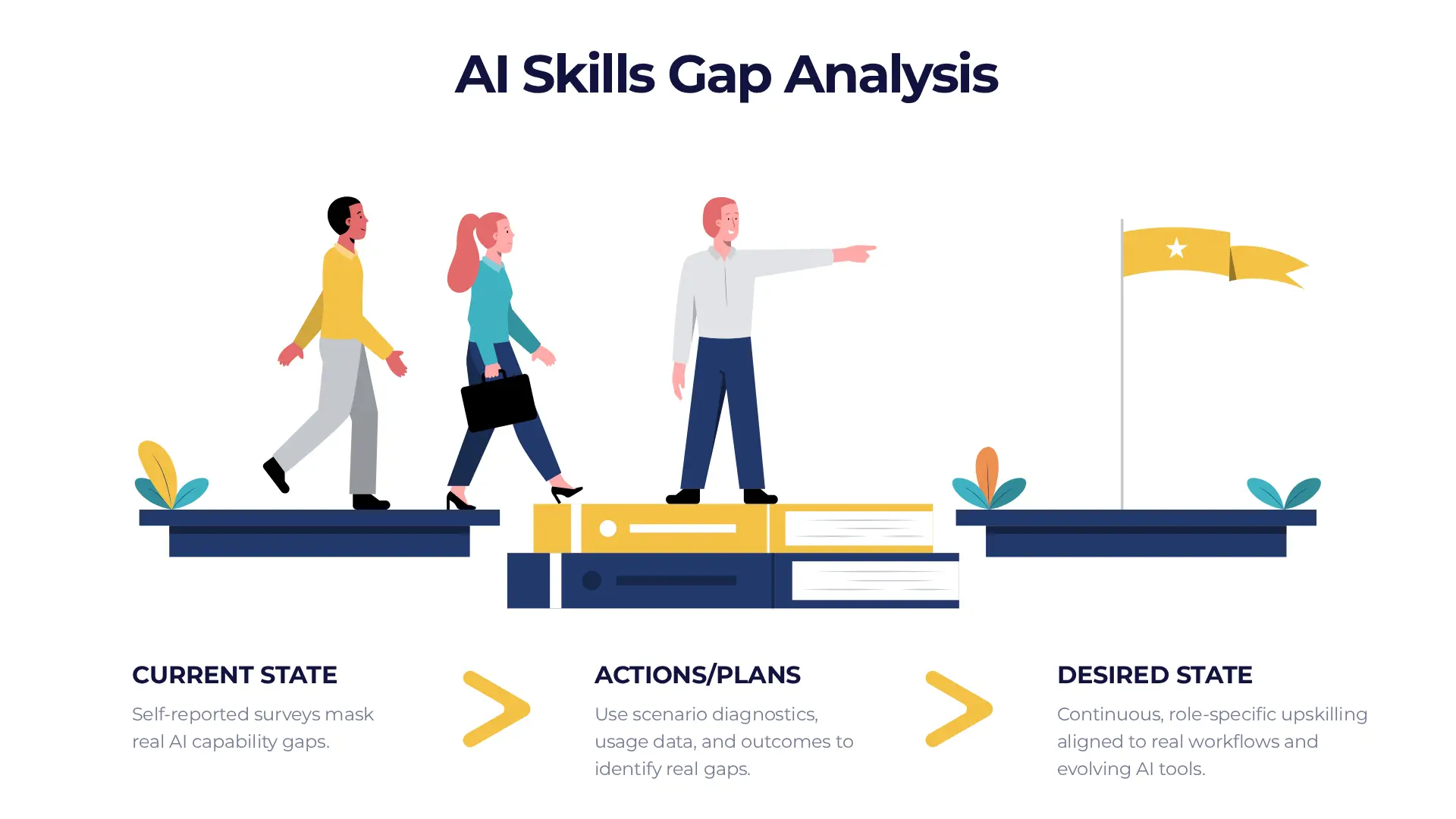

If your current strategy for assessing AI skills involves an annual survey asking employees how comfortable they feel with technology, you are not just behind — you are navigating 2026 with a map drawn in 1999. The survey will come back reassuring. The gaps it misses will not be.

AI learning gaps are different in character from most skill deficits an L&D team has to manage. They do not sit still. The technology evolves on a cycle that is considerably faster than any HR review process, which means that by the time a traditional competency assessment has identified a deficit, documented it, approved a training response, and scheduled the delivery, the specific capability being addressed may have already been superseded by a new model, a new tool, or a new failure mode your employees have not encountered yet. Speed is not the only problem. The nature of what you are measuring is.

We have covered the broader challenge of building an AI literacy programme elsewhere. This article focuses on a more specific question: how do you actually find out where your team's gaps are before those gaps start costing you something?

Why Traditional Assessment Methods Stumble With AI

The standard approach to skills gap analysis works reasonably well for stable competencies. You interview top performers, survey the broader population, compare what the best people do against what everyone else does, and design training to close the distance. For something like project management methodology or financial reporting, this is a coherent process. For AI literacy, it has three structural problems.

First, employees cannot report gaps in skills they do not know exist. If someone believes AI is essentially a faster version of a search engine, they will not flag a gap in prompt engineering or bias auditing, because those capabilities are not part of their mental model of what AI involves. The survey returns positive results not because the team is competent but because the team does not yet know the right questions to ask about itself.

Second, interviewing your top ten percent of AI users tends to surface intuition, not a repeatable framework. The person who is most effective with AI tools usually cannot fully articulate why: they have developed a feel for the work that is genuinely hard to transfer through a competency description. Trying to codify what they do and scale it as a training programme often produces a document that sounds right and trains almost nobody.

Third, traditional competency mapping is designed for skills that remain reasonably stable once defined. GE's rigorous skills inventory system, celebrated in the late 1990s for its role in sustaining operational excellence, began to show its limits as the pace of digital change accelerated. By the time a competency framework was defined, validated, and distributed, the software version it described had already been updated. AI is that problem at significantly higher speed.

By contrast, scenario-based diagnostics, usage data, and outcome metrics tend to produce assessment results that are more specific, more actionable, and more honest about where people actually are. They also work regardless of which tool the employee happens to be using, which matters because the assessment needs to remain relevant even as the tooling changes.

Redesigning the questions is the first step. Stop asking about tools ("how well do you know ChatGPT?") and start asking about outcomes. "How confident are you in verifying the factual accuracy of a machine-generated report?" surfaces a real and actionable gap where a tool-focused question would not.

Better Ways to Find Where the Gaps Actually Are

Redesigning the questions is the first step. The second is broadening the methods. Some approaches to AI skills assessment surface what surveys cannot, because they observe what people do rather than what they report about themselves.

Skillsoft's shift toward AI skills development offers a useful illustration of what outcome-based assessment looks like when it is properly operationalised. Rather than beginning with a course and measuring completion, their AI-native skills platform leads with a diagnostic challenge that simulates a real-world AI failure — a hallucinated output, a biased result, a prompt that produced the wrong thing for a plausible reason. The employee's response to that challenge determines which training they receive and at what level. The gap is identified first. The learning is then built around it.

This is assessment-first learning — the inverse of the traditional model, which assumes a gap exists and delivers training regardless of where each individual actually sits. It is more efficient, more targeted, and considerably more honest about what each person needs. It also tends to produce higher engagement — employees who have just encountered a problem they could not solve are far more motivated to learn the solution than those sitting through a module on a topic they believe they already understand.
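For teams that want to prototype the idea, the assessment-first flow can be sketched in a few lines of Python. This is an illustration only, not Skillsoft's actual logic: the failure categories, the score scale (fraction of each diagnostic challenge handled correctly), the thresholds, and the level names are all assumptions chosen for the example.

```python
# Hypothetical sketch of assessment-first routing. Categories, thresholds,
# and level names are illustrative assumptions, not any vendor's real rules.

# Assumed cut-offs: a score below 0.4 routes to "foundation" training,
# a score below 0.8 routes to "practice", anything higher needs no training.
LEVELS = [(0.4, "foundation"), (0.8, "practice")]


def assign_training(scores: dict[str, float]) -> dict[str, str]:
    """Map each diagnosed failure type to a training level.

    `scores` holds the fraction (0.0 to 1.0) of each diagnostic challenge
    the employee handled correctly. Areas at or above the top threshold
    are left out of the plan entirely.
    """
    plan = {}
    for failure_type, score in scores.items():
        for threshold, level in LEVELS:
            if score < threshold:
                plan[failure_type] = level
                break
    return plan


# One employee's diagnostic results (made-up numbers).
result = assign_training({"hallucination": 0.9, "bias": 0.3, "prompt_error": 0.6})
print(result)
```

With these made-up scores, the employee receives no hallucination training, foundation-level training on bias, and practice-level training on prompt errors. The point of the design is that the diagnostic result, not a course catalogue, decides what each person is asked to learn.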

Just-in-Time Learning: Building the Bridge as You Cross It

Even the best diagnostic process has a lag. By the time a gap is identified, assessed, and addressed through a formal training programme, the window in which that specific gap was causing the most damage has often passed — or a new gap has opened elsewhere. The organisations that handle AI skills development most effectively have recognised that the production cycle for AI training needs to match the pace at which the gaps appear, not the pace at which traditional L&D programmes are built.

The answer is modular training: small, targeted learning interventions built quickly in response to specific, observed failures. Not a comprehensive course on AI fundamentals. A ninety-second guide on the specific error pattern your data team made last week during financial close. Not a semester-long curriculum on prompt engineering. A short module on the exact prompt structure that is producing inconsistent results in the tool your customer service team uses every day.

JPMorgan Chase's internal approach to AI development illustrates what a dynamic training model looks like at scale. Rather than operating a fixed AI curriculum, their Data & AI Academy updates its content on a rolling basis in response to internal usage data and emerging needs across the organisation. The firm's deployment of large language models across research and risk functions has been accompanied by training that evolves alongside the tools — when a new capability is deployed or a new failure mode is identified, the Academy builds a targeted module for the relevant teams within weeks.

The result is a training programme that keeps pace because it is built on what is actually happening inside the organisation, not on what someone predicted employees would need at the start of the year. For most organisations, this kind of responsiveness requires a deliberate shift in how L&D is resourced and positioned — less emphasis on producing polished long-form courses on a slow cycle, more on producing accurate, targeted content quickly.

The Real Cost of Getting This Wrong

An AI learning gap is a risk to your organisation's mean time to recovery. When an AI system produces an error — a hallucinated figure in a financial model, a biased recommendation in a customer-facing workflow, a misclassified input that propagates through an automated pipeline — the time it takes your team to identify it, understand it, and correct it is a direct function of their AI literacy. A team that has been properly trained catches the error in minutes. A team with significant gaps catches it in hours, or days, or after the damage has already scaled.

This is the framing that tends to land most effectively with leadership when making the case for investment in AI skills assessment. It is not an abstract capability-building exercise. It is a risk management calculation. What is the cost of a four-hour recovery on an error that a trained team would have caught in four minutes? Multiply that across the frequency with which AI errors occur in your workflows, across the number of teams using AI tools, and the number becomes concrete and compelling fairly quickly.
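The calculation above is simple enough to put in front of a leadership team as a short script. The sketch below assumes hypothetical inputs throughout: the error frequency, the hourly cost, and the number of teams are placeholders to be replaced with your own figures, and only the four-minute versus four-hour contrast comes from the example in the text.

```python
from dataclasses import dataclass


@dataclass
class RecoveryScenario:
    """Inputs for one team. Every figure used below is a placeholder assumption."""
    errors_per_month: float    # AI errors that reach the team's workflow
    trained_minutes: float     # recovery time per error for a trained team
    untrained_minutes: float   # recovery time per error for a team with gaps
    cost_per_hour: float       # loaded hourly cost of the people involved


def annual_gap_cost(s: RecoveryScenario, teams: int = 1) -> float:
    """Yearly cost of the extra recovery time attributable to the skills gap."""
    extra_minutes_per_year = (s.untrained_minutes - s.trained_minutes) * s.errors_per_month * 12
    return extra_minutes_per_year / 60 * s.cost_per_hour * teams


# Four minutes versus four hours, five errors a month, ten teams (assumed values).
scenario = RecoveryScenario(
    errors_per_month=5,
    trained_minutes=4,
    untrained_minutes=240,
    cost_per_hour=300,
)
print(annual_gap_cost(scenario, teams=10))
```

Under these assumptions each team loses 236 hours a year to slower recovery, which comes to 708,000 in whatever currency the hourly cost is expressed in across ten teams. The model deliberately ignores the downstream cost of errors that scale before they are caught, so it is better read as a floor than as an estimate.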

Most organisations that invest in AI tools do not invest in measuring whether their people can use those tools well. Seat licences get purchased. Onboarding sessions get scheduled. Completion rates get tracked. Then the assumption is made that the gap has been closed. Completion is not competency. An employee who has finished an AI onboarding module and an employee who can reliably identify a hallucinated output, verify a model's reasoning, and know when not to trust the result are not the same employee — and right now, most organisations have no reliable way of knowing which one they have.

None of this requires a complete overhaul of how your organisation approaches learning and development. It requires adjusting the unit of measure, broadening the methods, and building the infrastructure for faster, more targeted training responses. The principles are the same ones good L&D has always relied on. The pace and the specificity are different — and in the AI era, those two things are not minor adjustments. They are the whole game.

Our AI literacy courses are built around practical, scenario-based learning that surfaces real gaps rather than assumed ones. If you are starting from scratch or looking to benchmark where your team sits today, we can help you find out.