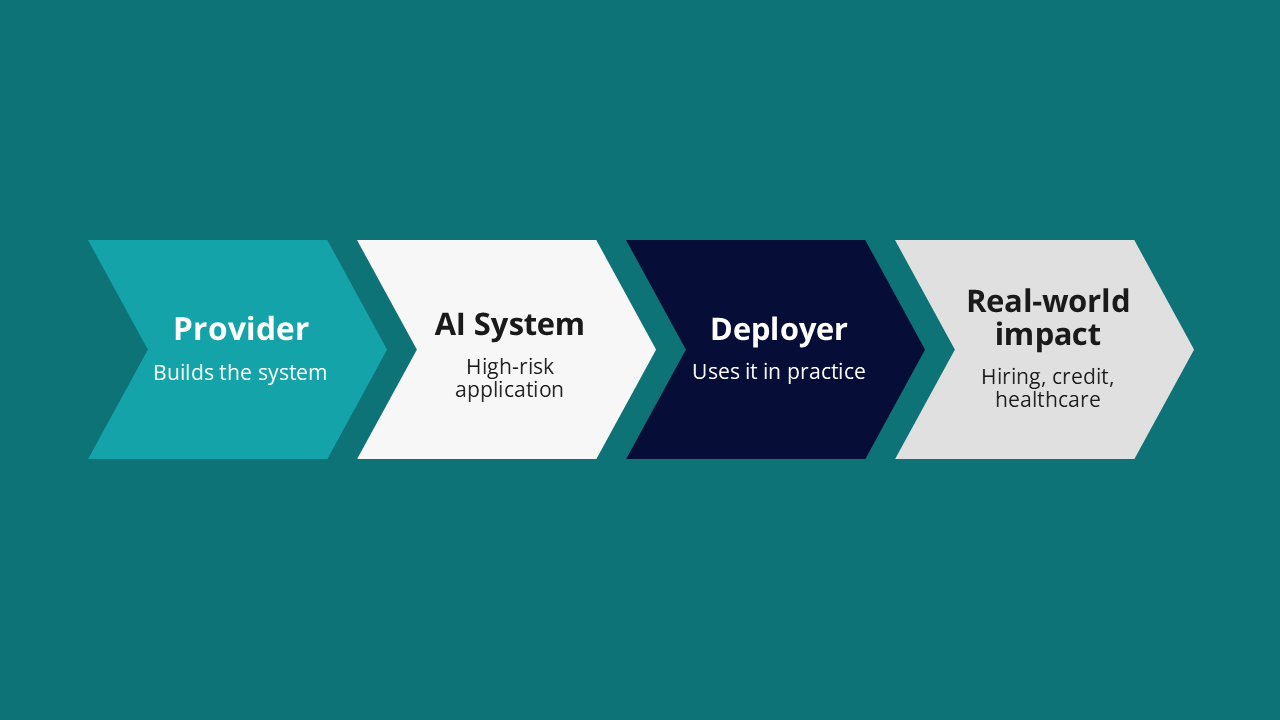

From August 2026, organisations using high-risk AI systems in the EU will face direct legal obligations under Article 26 of the EU AI Act. If your team is deploying AI in hiring, credit scoring, healthcare, or public services, compliance is no longer “the AI vendor’s problem.” You are the deployer, and responsibility for how the system is used sits with you.

What will that look like day to day? You must use the system strictly within its intended purpose, assign meaningful human-in-the-loop oversight, monitor performance and report incidents, retain system logs for at least six months, and ensure workers and affected individuals are informed. In some cases, you will also need to complete a Fundamental Rights Impact Assessment before using a new AI system for the first time. These are ongoing operational requirements that you will need to manage continuously.

If that sounds like a lot, this guide will walk you through it. We’ll show where organisations typically fall short and what compliant operation actually looks like in practice. And if you’re on the provider side instead, see our guide to conformity assessment. For deployers, this is the part of the Act that applies to you.

Are You a Deployer? Establishing Your Position

The Act defines a deployer as any organisation using an AI system under its authority, except for purely personal use. In practice, that covers a wider range of organisations than most assume. A bank licensing a credit scoring model is a deployer. A hospital implementing a diagnostic tool from a vendor is a deployer. A public authority using AI in benefits or employment decisions is, unsurprisingly, also a deployer.

The test is simple: did someone else build the AI system, and are you the one running it? If yes, and the AI system falls within the high-risk categories of Annex III, you are a deployer with obligations under Article 26. The question is not who built the AI system. The question is who is putting the AI system into use.

One caveat is important here. If your organisation substantially modifies a third-party AI system and places it on the market under its own name, you move from deployer to provider. That brings a different and significantly heavier set of obligations, including conformity assessment. If you are adapting, fine-tuning, or repackaging a system for redistribution, it is worth getting clear on which side of that line you sit before August 2026.

For a practical breakdown of which systems qualify as high-risk under Annex III, see our guide to high-risk AI requirements, which maps the categories to real-world use cases across employment, credit, healthcare, and public services.

Obligation One: Use the System as Instructed

The first and foundational deployer obligation under Article 26 is straightforward in its text and consequential in its implications: you must use the high-risk AI system strictly in accordance with the provider's instructions for use. Not roughly. Not approximately. Strictly.

This matters for two reasons. First, using a system outside its intended purpose voids the compliance framework the provider has built around it. The conformity assessment, the CE marking, the technical documentation: all of that is scoped to a specific intended use. Step outside it and you step outside the legal protection it provides, with liability following you.

In 2026, a provider named TalentTech CE-marked an AI tool specifically for shortlisting candidates from a CV pool. When a client used it to make final hiring decisions and set salary levels, they exceeded the tool's intended use — absolving TalentTech of liability for any resulting errors. Deployers must implement strict organisational measures to ensure the AI stays within its certified scope. That means a designated lead who understands the tool's legal boundaries and ensures they are enforced operationally.

Second, this obligation creates a direct procurement dependency. If a provider's instructions for use are incomplete, vague, or missing entirely, your ability to comply is compromised through no fault of your own. Obtaining complete and usable instructions before deploying any high-risk system is a procurement obligation, not an afterthought. If you cannot answer, from the documentation you were given, what this system is for, what it is not for, and when a human must review its output, you are not ready to deploy it.

Obligation Two: Assign Real Human Oversight

Of all the deployer obligations, this is the one most organisations are least equipped to meet. Not because it is technically complex, but because it requires genuine capability rather than paperwork.

Article 26 requires you to assign human oversight to natural persons who have the necessary competence, training, and authority to perform it. The person you assign must be able to understand what the system is doing, detect when it is performing outside expected parameters, and take action, including overriding or suspending the system entirely, when something looks wrong.

The reference to Article 4 in this obligation is deliberate. Human oversight must be assigned to persons with the necessary competence under Article 4, training, and authority. Article 4 is the AI literacy requirement. The Act explicitly connects oversight capability to training. An organisation cannot claim compliant human oversight if the people responsible for it have received no meaningful training on the system they are supposed to oversee. Generic AI awareness sessions do not satisfy this. Role-specific capability does.

What that role-specific capability looks like in practice is covered in the guide to what AI training employees actually need. The short version: oversight staff need to understand how the system generates outputs, what its documented failure modes are, and what escalation looks like when something does not look right.

Obligation Three: Monitor and Report

You must actively monitor the operation of your high-risk AI systems based on the provider's instructions, and act when risks emerge. If risks to health, safety, or fundamental rights arise, notify the provider immediately and stop using the system.

The sequence matters: notify the provider first, then importers and distributors where relevant, then the market surveillance authority. If the provider cannot be reached, the notification goes directly to the authority. This is not a passive obligation. It requires deployers to have a mechanism for identifying when a system is generating outputs that fall outside expected parameters, which in turn requires knowing what normal performance looks like.

Most deployers do not establish a performance baseline before going live. In 2021, Michigan Medicine deployed a sepsis-detection AI without first establishing a local performance baseline. Because they had no precise record of how the system performed on Day 1, they had no way to detect when the AI began missing 67% of sepsis cases — a significant departure from the developer's promised accuracy. The drift happened silently. There was no reference point to trigger an alarm. An external study eventually exposed the failure, by which point the clinical and legal risks had been accumulating undetected for months.

In 2024, Apex Finance launched a credit-scoring AI without recording a baseline of its initial approval rates across different demographics. As the economy shifted over the following eighteen months, the model encountered unfamiliar data distributions and began disproportionately rejecting applicants from specific postcodes. Because Apex had never established what normal performance looked like at go-live, this drift remained undetected by their monitoring tools.

By the time a regulatory audit flagged the bias in 2026, the bank was already facing significant fines for discriminatory lending. Without a Day 1 reference point, monitoring is merely a formality. It does not protect an organisation from the gradual accumulation of liability that follows.
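A Day 1 reference point can be as simple as a stored table of rates per group that later measurements are compared against. The sketch below is illustrative only: the group labels, the rates, and the 0.05 tolerance are hypothetical assumptions, not values taken from the Act or from any provider's instructions for use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftAlert:
    group: str
    baseline_rate: float
    current_rate: float


def detect_drift(baseline, current, tolerance=0.05):
    """Return an alert for every group whose rate moved more than `tolerance`.

    `baseline` and `current` map a group label to a rate between 0 and 1.
    The default tolerance is a hypothetical value; in practice it would be
    derived from the performance ranges documented by the provider.
    """
    alerts = []
    for group, base_rate in baseline.items():
        if group not in current:
            continue  # no recent data for this group; handle separately
        if abs(current[group] - base_rate) > tolerance:
            alerts.append(DriftAlert(group, base_rate, current[group]))
    return alerts


# Hypothetical approval rates recorded at go-live and at a later review.
baseline_rates = {"postcode_area_A": 0.62, "postcode_area_B": 0.60}
current_rates = {"postcode_area_A": 0.61, "postcode_area_B": 0.41}

alerts = detect_drift(baseline_rates, current_rates)
for alert in alerts:
    print(f"{alert.group}: {alert.baseline_rate:.2f} -> {alert.current_rate:.2f}")
```

With these made-up numbers, only `postcode_area_B` is flagged. The comparison only works because the baseline was captured at go-live; without it there is nothing to compare against.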

Early self-reporting when something does go wrong is a meaningful mitigating factor in enforcement. The worst position to be in is one where a regulator discovers an incident you identified and chose not to escalate. That is treated differently, and worse, than an organisation that caught a problem and reported it promptly.

Obligation Four: Retain Logs for Six Months

Article 26 is specific: logs automatically generated by the AI system must be stored for at least six months, in compliance with applicable data protection law.

This six-month minimum applies to logs the deployer has access to. The purpose is to ensure that decisions made with AI assistance can be traced, reviewed, and audited after the fact. In employment, credit, or benefits contexts, where affected individuals may challenge decisions weeks or months later, this retention period provides the evidential basis for any review. Without it, you cannot demonstrate compliant oversight and you cannot reconstruct what the system actually did.

The interaction with GDPR data minimisation principles requires careful joint navigation by legal and compliance functions. You need to retain logs long enough to satisfy the AI Act's six-month minimum while not retaining personal data beyond what GDPR permits for the underlying processing purpose. These two obligations do not automatically conflict, but they do require deliberate design rather than default settings.

In 2026, a firm called Metro Logistics deployed a SaaS AI assistant from a provider that kept all system logs on a proprietary, inaccessible server. When a regulatory review required the firm to produce six months of records, Metro Logistics discovered they had no way to export the data and were left entirely dependent on the provider's slow-moving support team.

If you cannot access and export your system's logs, your ability to meet the six-month retention obligation depends entirely on your provider's cooperation. Secure independent log access and export rights in writing before signing a contract. Not after an incident, when you may find you are locked out of your own compliance records.
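Once you do have export access, the retention rule itself is easy to enforce in a purge job. The following is a minimal sketch under stated assumptions: 183 days is used as a conservative stand-in for "six months", and the function names and timestamps are hypothetical. It only marks entries as eligible for review; whether they should then be deleted depends on your GDPR retention policy.

```python
from datetime import datetime, timedelta, timezone

# Assumption: 183 days is a conservative stand-in for "six months".
MIN_RETENTION = timedelta(days=183)


def may_delete(log_created_at, now, min_retention=MIN_RETENTION):
    """Return True only once a log entry is older than the minimum retention period."""
    return now - log_created_at > min_retention


def split_logs(log_timestamps, now):
    """Partition log timestamps into (must_keep, eligible_for_review)."""
    must_keep = [ts for ts in log_timestamps if not may_delete(ts, now)]
    eligible = [ts for ts in log_timestamps if may_delete(ts, now)]
    return must_keep, eligible


# Hypothetical example: two log entries checked on 1 March 2027.
now = datetime(2027, 3, 1, tzinfo=timezone.utc)
logs = [
    datetime(2026, 8, 15, tzinfo=timezone.utc),  # 198 days old
    datetime(2026, 12, 1, tzinfo=timezone.utc),  # 90 days old
]
must_keep, eligible = split_logs(logs, now)
print(len(must_keep), len(eligible))
```

Keeping the minimum as a single named constant makes it straightforward for legal and compliance teams to review and adjust the value.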

Obligation Five: Notify Workers and Affected Individuals

Two notification obligations apply to deployers under the Act, and they apply to different audiences. Both use the word "inform." Neither is satisfied by a general privacy policy or a buried terms-of-service clause.

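The first, under Article 26(7), concerns the workplace: deployers that are employers must inform workers' representatives and the affected workers that they will be subject to the use of a high-risk AI system. This must happen before go-live. It is a transparency obligation that reflects the Act's concern about AI being used to monitor, evaluate, or make decisions about employees without their knowledge.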

The notification must be specific to the AI system and its role: what it does, what it does not do, and what oversight mechanisms are in place. A general announcement that "we are using AI tools" is not sufficient.

In some EU member states, this notification triggers consultation or co-determination procedures under national labour law. Check what applies in each jurisdiction before treating this as a simple tick-box.

A separate obligation under Article 26(11) requires deployers to inform individuals who are subject to AI-assisted decisions about the use of those systems.

If your AI system plays a role in a credit decision, a benefits assessment, a recruitment outcome, or any other consequential determination, the person on the receiving end is entitled to know an AI was involved.

This notification, too, must be specific to the AI system and its role in the decision.

Obligation Six: Fundamental Rights Impact Assessment

The Fundamental Rights Impact Assessment is the most distinctive deployer-specific obligation in the Act, and the one most likely to be missed by organisations that have focused their compliance attention on the provider side. It is also the one with the most substantive analytical content. This is not a checkbox exercise.

The FRIA does not apply to all deployers of high-risk AI. It applies to a specific subset: public bodies and private entities providing public services that deploy Annex III systems, and, regardless of whether they are public or private, any deployer using AI for credit scoring or for risk assessment and pricing in life and health insurance. If you fall into one of those categories, the FRIA must be completed before first use and updated if underlying circumstances change.
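The scoping rule above can be written down as a short triage check for an intake form or procurement workflow. This is a sketch that encodes only the two routes described in the paragraph; the `Deployment` fields and the `fria_required` function are hypothetical names, and a positive or negative result is a prompt for legal review rather than a determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    is_public_body: bool
    provides_public_services: bool
    is_annex_iii_system: bool
    used_for_credit_scoring: bool
    used_for_life_health_insurance_pricing: bool


def fria_required(d):
    """Apply the two routes described in the text above."""
    # Route 1: public bodies, or private entities providing public
    # services, that deploy an Annex III system.
    public_route = d.is_annex_iii_system and (
        d.is_public_body or d.provides_public_services
    )
    # Route 2: any deployer, public or private, using AI for credit scoring
    # or for risk assessment and pricing in life and health insurance.
    use_case_route = (
        d.used_for_credit_scoring or d.used_for_life_health_insurance_pricing
    )
    return public_route or use_case_route


# Hypothetical deployments for illustration.
private_bank = Deployment(False, False, True, True, False)
private_recruiter = Deployment(False, False, True, False, False)
city_authority = Deployment(True, False, True, False, False)

print(fria_required(private_bank))
print(fria_required(private_recruiter))
print(fria_required(city_authority))
```

In this sketch the private bank and the city authority are flagged, while the private recruiter is not, which mirrors the point that the FRIA applies to a subset of deployers rather than to all of them.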

The assessment must take a preventive and risk-based approach. The objective is to identify, prior to deployment, potential adverse impacts on fundamental rights arising from the use of the high-risk AI system. In practice that means working through three things: the categories of individuals who will be affected and whether any are in positions of particular vulnerability; the specific rights at stake, which almost always include non-discrimination and will vary by context; and the concrete measures you will take to address identified risks. Not vague commitments, but specific and accountable ones.

Once completed, the results must be registered in the EU database and made available to market surveillance authorities on request. If your deployment context changes materially, the assessment should be revisited, not filed away as a completed task.

Public Authorities: Additional Registration Requirement

One obligation applies specifically to public authority deployers, and it sits slightly apart from the Article 26 framework. Under Article 49, public authority deployers must verify that any high-risk AI system they intend to use is registered in the EU database. If it is not registered, they must not use it, and they must inform the provider or distributor of the gap.

This creates a verification step in procurement that sits alongside the provider's obligation to register. Registration is ultimately a provider responsibility, but using an unregistered system is a compliance failure on the deployer's side too. For public sector procurement teams, this means adding database verification to the standard pre-deployment checklist. Confirmation that the system is registered should sit alongside confirmation that the conformity assessment has been completed.

The broader point this illustrates is one that applies to all deployers, not just public authorities: your compliance is partially dependent on your provider having met their obligations. The strongest position is one where you have independently verified the compliance status of any system you deploy, not simply assumed it.

Compliance Checklist for Deployers

For compliance, legal, and operational leads assessing Article 26 readiness before August 2026.

Savia's AI governance and compliance learning paths build the role-specific capability that genuine oversight requires: not awareness training, but the judgment and authority to question, monitor, and override AI systems in practice.