Most AI projects fail not because the technology underperforms, but because the intent behind them was never clearly defined. Organisations enter what might be called experimentation mode: pilots get launched, tools get procured, dashboards get built, and a steady stream of activity gets reported to leadership as progress. Then, twelve months later, someone asks what the return has been, and the honest answer is that nobody defined what return would look like.

Trying out new technology is worthwhile, but your organisation also needs a consistent approach and strategy. Amazon may have started in a garage, but there was always an idea of how to leave that garage and build something more. Scattered experiments rarely produce institutional capability. They produce evidence that AI was tried, which is a very different thing.

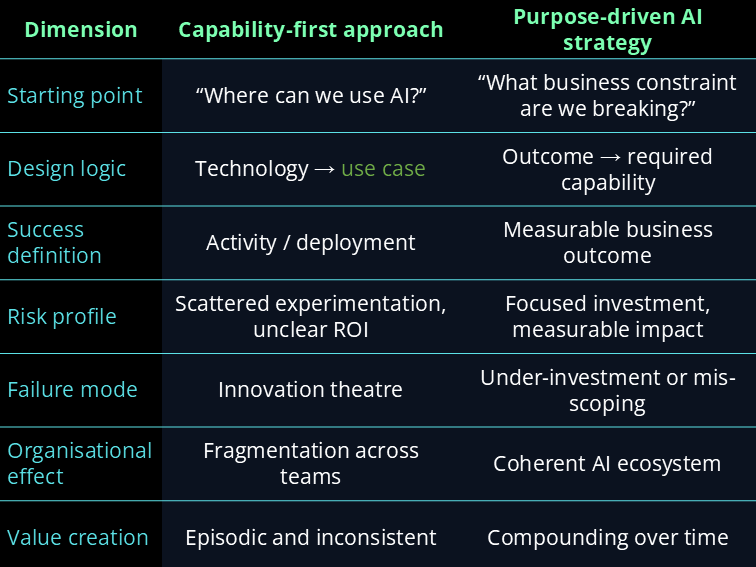

A purpose-driven AI strategy starts from the opposite direction. It defines the business outcome first, then asks what role AI should play in achieving it. The difference in approach sounds straightforward. The difference in results — in the organisations that have applied it — is significant. For more on what AI literacy means as a foundation for this kind of strategic thinking, see our piece on what AI literacy actually is; this article focuses specifically on the strategic layer that determines whether AI efforts build toward something or dissipate into noise.

What Most AI Initiatives Get Wrong First

The most common failure mode in AI strategy is solving for "how" before defining "why." A team identifies a tool, builds a pilot around its capabilities, and then works backwards to justify it with business metrics. The pilot demonstrates that the technology functions. It rarely demonstrates that the technology was the right answer to the right question.

The costs of this approach are familiar to anyone who has worked inside a large organisation during an AI push. Compute resources get consumed by initiatives with no clear success criteria. Talented people spend months building systems that get deprioritised when leadership attention shifts. And a creeping scepticism develops among the teams closest to the work — the sense that AI is being deployed for the appearance of innovation rather than for any specific operational gain. Call it innovation theatre: the performance of transformation without its substance.

The consequences are not always internal. In May 2024, Google's AI Overviews feature, a high-profile, consumer-facing initiative designed to surface AI-generated summaries at the top of search results, began suggesting that users eat rocks and add glue to pizza sauce, having surfaced content from satire sites and forum jokes as factual information. The initiative had clearly prioritised fluency over factuality: the outputs sounded confident and well-structured, which made the errors harder to detect and more damaging once they were noticed. Google moved quickly to address specific cases, but the episode illustrated what happens when a capability is deployed at scale before the question "what should this actually be for, and what does failure look like?" has been answered clearly enough to build safeguards around.

This pattern is not limited to content accuracy. As AI systems become more capable, they also expand the attack surface for misuse. For example, advances in AI-driven password cracking highlight how quickly security assumptions can become outdated when AI capability accelerates faster than defensive design.

The financial cost of failed AI pilots is significant but recoverable. The cultural cost is harder to repair. Employees who watch AI initiatives launched with fanfare and quietly abandoned develop a rational scepticism about the next initiative — which makes genuine transformation harder to achieve, not easier. Purpose-driven strategy is not just more efficient. It is the prerequisite for organisational trust in AI.

What Is a Purpose-Driven AI Strategy?

The shift a purpose-driven strategy requires is not primarily technical. It is a shift in the questions that govern how AI investments get made.

Purpose in Action: Two Organisations That Got This Right

The clearest way to understand purpose-driven AI strategy is to look at what it produces. Both of the following organisations defined a specific, high-stakes business constraint before selecting their AI approach — and both achieved outcomes that would not have been possible with a capability-first strategy.

Moderna's AI strategy was not built around a general aspiration to "use AI in drug development." It was built around a specific operational constraint: the time required to move from sequence design to clinical batch production was the primary bottleneck in their pipeline, and it was measured in years.

By anchoring AI development to that specific constraint, and measuring every initiative against its impact on that timeline, Moderna was able to reduce clinical batch production from years to weeks during COVID-19 vaccine development. The AI was not a feature of their scientific workflow. It was the mechanism through which the constraint was broken.

Intercom did not build their AI assistant Fin to "improve customer support." Their defined purpose was autonomous resolution of customer issues — not assistance, not more efficient escalation, but complete resolution — measured by a reduction in support overhead for clients. That specificity shaped every product decision. Fin was built to resolve queries independently on first attempt, not to route them more smoothly to humans. The constraint was defined as the percentage of queries requiring human involvement, and the goal was to reduce it materially. Many Intercom clients report support overhead reductions of around 50% — an outcome that required precise intent, not general ambition.

What both cases share is not exceptional technology. They share a discipline of definition — the practice of specifying what the AI is for clearly enough that the organisation could tell, at any point during development, whether it was moving toward the goal or away from it. That discipline is what purpose-driven strategy produces.

Purpose as a Filter, Not Just a Goal

One of the most practically useful properties of a well-defined AI purpose is that it functions as a filter for saying no. In most organisations, the supply of AI ideas exceeds the capacity to execute them by a significant margin. Without a filter, the default decision-making process involves whoever argues most convincingly, whichever team has the most political capital, or whichever proposal arrived most recently. None of these criteria reliably identify high-value initiatives.

A clear organisational AI purpose changes this. If the stated purpose is reducing time-to-decision in the underwriting process, then a proposal for AI-assisted marketing copy generation — however well-designed — is a distraction from that purpose. It may be worth pursuing eventually, but it is not the priority now. Purpose gives you a principled basis for sequencing that does not depend on politics or persuasion.

Amazon's approach to AI prioritisation illustrates this clearly — though not without complication. Teams are expected to work backwards from a specific customer or operational problem, a practice embedded in their document culture. When a proposed AI initiative cannot be anchored to a measurable outcome, it does not move forward regardless of the technical interest involved. A Guardian investigation into Amazon's AI deployment found that this disciplined, outcome-first approach has produced genuine efficiency gains in logistics and operations — while also revealing tensions where the same purposeful rigour has been applied to workforce monitoring in ways that raise legitimate human and ethical questions. The lesson is not that purpose-driven strategy is without risk, but that it forces those risks into the open where they can be examined, rather than allowing them to emerge quietly from unexamined pilots. A clear purpose is a better starting point for that conversation than the absence of one.

This filter function also creates organisational coherence across departments that might otherwise build disconnected AI ecosystems. When Sales, Product, and Operations each understand the same central AI purpose, their separate initiatives can be designed to reinforce each other rather than duplicate effort or create conflicting data structures. A shared purpose is the architecture of a unified AI ecosystem — not a technology decision, but a strategic one.

Building Your Purpose Framework

Defining AI purpose is not the same as writing a mission statement. It requires answering four specific questions before any implementation work begins. These four elements together constitute a purpose framework: the minimum specification that distinguishes a focused initiative from an unfocused one.

An initiative that cannot be described through all four elements is not ready to begin. This is not a bureaucratic hurdle — it is a quality check that prevents the most common causes of AI project failure from taking root before work starts.

Leadership as the Strategic Signal

The ultimate bottleneck in AI strategy is rarely the technology. It is the clarity of the leadership signal. When organisational direction is ambiguous, teams optimise for local wins — whatever makes their function look good in the next review cycle. Individual pilots may succeed on their own terms while the organisation as a whole fails to build compounding capability.

Purpose-driven AI strategy requires leaders to do something harder than approving a technology budget: to define, communicate, and consistently reinforce a direction. That means being willing to say no to initiatives that fall outside the stated purpose, even when those initiatives come with compelling pilots. It means measuring AI investment against business outcomes rather than AI activity. And it means modelling the kind of disciplined, outcome-focused thinking that the strategy requires from everyone else.

The connection between strategic clarity and team performance is explored in more depth in our piece on AI leadership, specifically the challenge of maintaining focus and motivation across teams navigating complex, long-horizon AI transitions. Leaders who cannot articulate why an AI initiative matters to the business will struggle to sustain the team through the periods when the work is difficult.

AI as a Force Multiplier

AI is a force multiplier for your existing direction. If that direction is clear, the technology accelerates growth. If it is unclear, the technology scales confusion — faster, at greater expense, and with more professional credibility attached to the confusion than it would have had before AI was involved.

Organisations that lead with purpose arrive at institutional AI capability faster and with less wasted motion than those that lead with tools. This is not because they move more slowly or more cautiously, but because each initiative builds on the last. A clearly defined first initiative creates infrastructure, data, and organisational learning that make the second initiative faster. The second creates conditions for the third. Over time, the organisation builds something that scattered pilots never produce: a genuine, defensible AI capability that is integrated into how the business operates rather than adjacent to it.

A clear AI strategy requires people who can execute it — teams that understand how to evaluate AI outputs, design human-in-the-loop workflows, and maintain accountability as the tools evolve. Our AI literacy courses develop that foundation.
