Most organisations assume AI adoption fails because of the wrong tools or poorly chosen use cases. In practice, the most common barrier is simpler and harder to fix: people do not yet know how to use AI effectively in their daily work. The tools get rolled out. The workshops get scheduled. And then most employees return to their desks and continue working exactly as they did before, with a new tab open that they consult occasionally when nothing else is working.

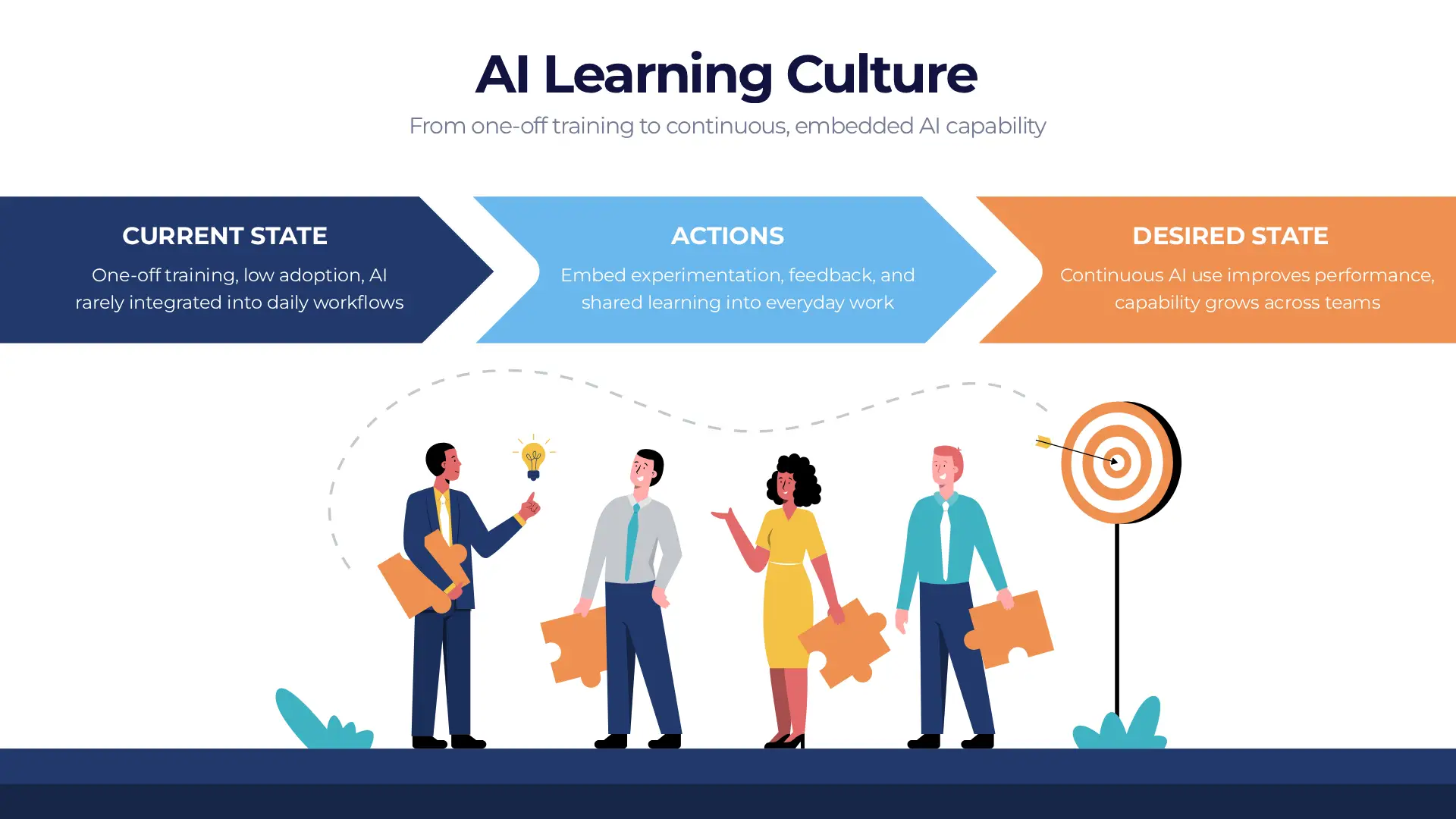

This is not a technology problem. It is a learning culture problem. And it will not be solved by another AI awareness session. We cover the governance side of AI adoption elsewhere. This article focuses on the human and organisational side: what an AI learning culture actually means, what it looks like in practice across real organisations, and what gets in the way of building one.

Why One-Off Training Is Not Enough

The standard organisational response to AI adoption involves a sequence of events that has become familiar: an AI workshop, a prompt engineering session, a tool demo from the vendor, a follow-up email with resources nobody reads. These interventions raise awareness. They do not build capability.

Learning that is disconnected from actual work decays quickly. An employee who attends an AI training session and then returns to a role with no structured opportunity to experiment, no feedback on what they produce, and no colleagues sharing what they have learned will revert to familiar habits within weeks. The knowledge fades not because the employee was inattentive but because learning requires repetition, application, and feedback — none of which a workshop delivers.

One-off training creates awareness. Continuous practice creates capability. These are not different points on the same spectrum — they are fundamentally different outcomes. Awareness means an employee knows what AI tools exist. Capability means they know when to use them, how to evaluate what they produce, and how to improve their approach over time.

What an AI Learning Culture Actually Means

What This Looks Like in Practice

The clearest way to understand an AI learning culture is to look at organisations that have built one. The examples below are drawn from across sectors and use cases, with one thing in common: AI is embedded in how work gets done, not bolted on as a separate tool employees access when they remember it exists.

Across these examples, the pattern is consistent with what we explored in our piece on where AI actually creates value: the organisations seeing real results are those where AI is integrated into existing workflows, human judgment remains in place, and the act of using AI is itself the mechanism through which people get better at using it.

Common Pitfalls That Stall the Culture

Understanding what an AI learning culture looks like makes it easier to see what undermines one. Four failure patterns appear consistently across organisations where AI adoption has stalled or fragmented.

When AI use is discretionary, adoption becomes a personality trait rather than a professional standard. The enthusiastic early adopters use it extensively. Most people do not. The result is knowledge silos rather than shared capability — and the gap between early adopters and everyone else widens over time rather than closing.

Concentrating AI capability in a small group of enthusiastic users creates a bottleneck and a single point of failure. When those individuals leave, the organisational knowledge they developed leaves with them. Building an AI learning culture requires deliberately distributing capability across teams, not allowing it to concentrate in a handful of champions.

Organisations that invest heavily in procuring AI tools, and only lightly in the judgment and workflow design needed to use them well, consistently underperform organisations with the opposite balance. The tool is the least difficult part of the problem. Knowing when to use it, how to evaluate its outputs, and how to integrate it without losing accountability all take deliberate development.

AI literacy does not develop through passive instruction. It develops through trial, failure, reflection, and iteration — which requires protected time. Organisations that expect AI capability to develop alongside full workloads, without any dedicated space for experimentation, get superficial adoption and shallow learning.

The Role of Leadership

AI learning cultures are cultivated, not emergent. They do not develop spontaneously when tools are made available. They develop when leadership creates the conditions for them — and that requires something more than endorsing AI in an all-hands presentation.

Leaders who build AI learning cultures do three things consistently. They model experimentation themselves, demonstrating that trying new approaches and being open about what works and what does not is a professional norm rather than a vulnerability. They provide time and resources for learning that is protected from the immediate pressure of delivery. And they measure outcomes — speed, quality, error rates, capability growth — rather than just outputs, which creates the feedback loop that makes learning visible and valued.

This connects directly to the leadership challenges we explore in more depth elsewhere: the human and motivational dimensions of leading a team through AI adoption are as important as any technical decision about which tools to use.

Practical Steps to Build the Culture

Building an AI learning culture does not require a large programme or a long runway. The organisations that have done it most effectively have started small and iterated — which is, perhaps fittingly, exactly the approach they are trying to instil.

Start with real, high-value use cases. Abstract AI experimentation produces abstract learning. When employees apply AI to a task they actually care about — something that takes them too long, frustrates them, or blocks something else — the feedback is immediate and the motivation to improve is genuine.

Encourage small, low-risk experiments with visible results. The first experiments should be chosen specifically because failure is cheap. A bad AI-generated first draft of an internal document is a learning experience. A bad AI-generated client proposal is a problem. Start where the cost of error is low and the frequency of the task is high.

Make learning visible and shareable. When someone finds a prompt pattern that works, a failure mode worth knowing about, or a workflow integration that saves meaningful time, that knowledge should go somewhere others can find it: a shared library, a team wiki, or a regular five-minute knowledge share in a standing meeting. Learning that stays in one person's head does not scale.

Build simple frameworks for when and how to use AI. Employees do not need comprehensive AI policies to get started — they need enough structure to feel confident making decisions. A simple guide covering which tools are approved, what should not be fed into external models, and where human review is required gives people enough to move forward without waiting for complete governance documentation.

Our AI literacy courses are designed to develop practical, role-relevant capability — not just awareness. If your organisation is ready to move from AI adoption to AI proficiency, we can help build the foundation.
