Most organisations can now say they have done something on AI training. A lunchtime session. An e-learning module. A vendor-led demo. The box is ticked. The problem is that ticking the box and closing the skills gap are not the same thing.

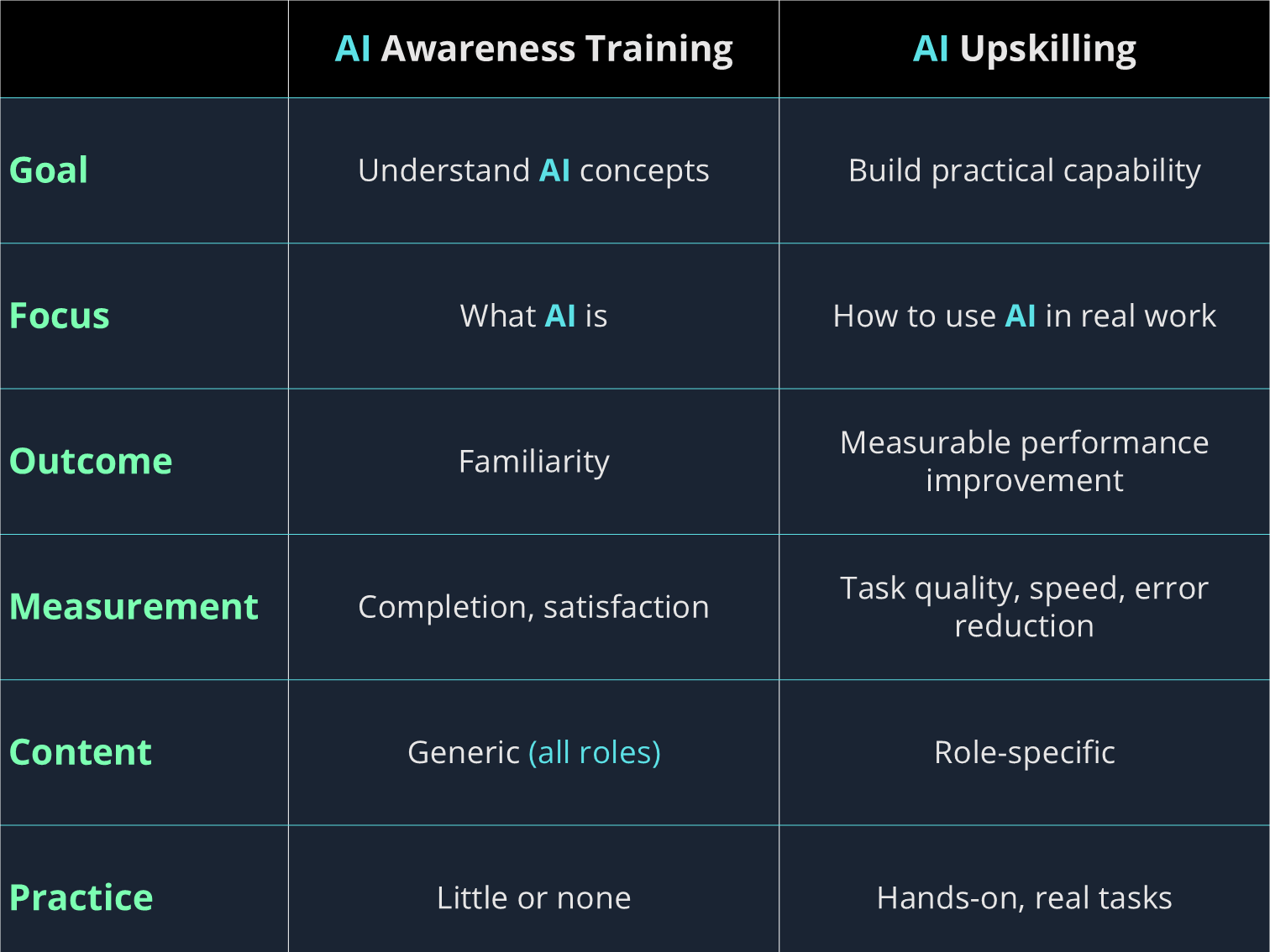

AI awareness training and AI upskilling are often used interchangeably. They should not be, and treating them as equivalent is one of the most common reasons AI training programs fail to produce measurable change. Awareness training tells employees that AI exists, what it broadly can do, and why it matters. Upskilling changes what employees can actually do: with specific tools, in specific workflows, producing outputs that are measurably better than before.

The distinction matters because most organisations need upskilling but are only funding awareness. McKinsey finds nearly half of employees want more formal training despite broad familiarity with AI tools. Familiarity is not the same as capability. This article explains the difference, why it matters for ROI, and how to know which one your current program is actually delivering. For how to connect that to measurement, see how to measure the ROI of AI training.

What AI Awareness Training Actually Is

AI awareness training is designed to reduce unfamiliarity. Its primary goal is to make AI feel less foreign: to give employees a mental model for what AI is, what it can broadly do, and why the organisation is investing in it. Done well, awareness training is genuinely useful. It reduces fear, creates a shared vocabulary, and lays the conceptual groundwork for more advanced learning. It is also fast to produce, easy to scale, and simple to measure by completion rate.

The problem is not that awareness training exists. The problem is that it is frequently where the training investment ends.

Consider the scale of the gap this creates. Only 15% of US employees say their workplace has communicated a clear AI strategy. Yet 78% of organisations reported using AI in their operations in 2024, up from 55% just a year before. Most employees are being told AI is happening without being told what to do with it.

Awareness training leaves employees knowing more about AI without being able to do more with it. They can describe what a large language model is. They cannot reliably produce a useful output from one. That gap — between knowing and doing — is exactly what upskilling is designed to close.

What AI Upskilling Actually Is

Upskilling is applied capability development. It starts with a specific role, a specific workflow, and a specific skill gap — and it finishes when the employee can perform a task they could not perform before, or perform it to a reliably higher standard.

The distinction is practical, not academic. Awareness training might teach a marketing manager that AI can help with content creation, a finance analyst that it can support modelling, or an educator that it can assist with structured lesson design using AI-powered teaching tools. Upskilling would teach that same marketing manager how to write a brief that produces usable first drafts, how to review AI output for brand fit and factual accuracy, and when not to use AI for a given content type because the risk of error outweighs the time saving.

JPMorgan Chase is an instructive example of what this looks like at scale. Rather than issuing an all-staff awareness briefing, new analyst hires in the bank's Asset and Wealth Management group now receive mandatory training in prompt engineering, designed to ensure they can use generative AI tools effectively in their daily workflows. That is not awareness. It is role-specific, tool-specific applied practice. Employees using JPMorgan's LLM Suite report 30 to 40% efficiency gains, with AI benefits growing at a similar rate each year.

Upskilling is harder to design, slower to deliver, and more expensive per learner. It also requires knowing what good performance looks like before and after training, which means it forces organisations to be specific about what they are trying to change. That specificity is not a burden. It is what makes upskilling programs measurable, and measurable programs justifiable. Building practical AI training programs covers what that design process actually looks like.

Why the Distinction Matters for Your Skills Gap

The AI skills gap most organisations report is not an awareness gap. Employees broadly know that AI tools exist. They have heard about ChatGPT. Many have experimented with it personally. The gap is in applied fluency: the ability to use AI tools consistently, appropriately, and to a professional standard within a specific role.

This is where the mismatch between what organisations fund and what employees lack becomes costly. Organisations that respond to a fluency gap with awareness training are not closing the skills gap. They are documenting it. Completion certificates accumulate. Capability does not.

This matters most in contexts where AI output directly affects quality, risk, or customer experience. A BetterUp and Stanford University survey of 1,150 US desk workers found that 40% reported receiving AI-generated output that masquerades as productivity but falls short of human-produced work and can take hours to fix. That is the downstream cost of confidence without competence. An employee who has completed an AI awareness module but has no practised judgment about when AI output is unreliable is not safer than an untrained employee. They may be less cautious.

The ROI Gap Between the Two

The financial case for distinguishing awareness from upskilling is straightforward, and the data behind it is consistent.

A 2025 EY survey of 500 senior US decision-makers found that 96% of organisations investing in AI are experiencing some level of AI-driven productivity gains. Among those who have seen positive ROI, 56% report significant, measurable improvements in overall financial performance. Those figures do not by themselves explain why some organisations gain more than others. The argument here is that the critical variable is not the AI tool but whether employees know how to use it.

McKinsey research shows leading AI adopters outperform competitors by two to six times in total shareholder returns. What separates those companies from their competitors is not access to AI. It is how well their people use it.

There is also a secondary cost to awareness-only training that rarely appears in the ROI calculation: the cost of misuse. Deploying AI tools to employees who have been told the tools are useful, but not trained in how to use them responsibly, can produce more errors, more rework, and more review overhead than not training at all. Walmart illustrates the alternative. The company has reskilled over 100,000 employees through its internal upskilling program, filling talent gaps without relying on external hires, and routes 5.5 million training hours annually through structured programs that now include AI-specific curricula.

Consider an illustrative scenario. Two organisations deploy the same AI writing assistant to their content teams. Organisation A runs a one-hour awareness session. Organisation B runs a four-week upskilling program covering brief-writing, output review, fact-checking protocol, and brand verification. At six months, Organisation A's team is producing roughly the same volume of content with marginally faster first drafts that require the same revision time as before. Organisation B's team has reduced revision cycles by 40% and expanded output volume without headcount growth.

Same tool. Different training investment. The ROI differential is not driven by the software licence. It is driven by the capability of the people using it.

How to Tell Which One Your Current Program Is Delivering

Most organisations believe their AI training sits closer to the upskilling end of the spectrum than it actually does. These four questions are a more reliable test.

After training, can employees do something specific they could not do before? If the honest answer is "they understand AI better," that is awareness. If the answer names a concrete task or workflow, that is upskilling.

Is the training mapped to specific roles? Generic AI literacy content delivered to all employees at once is awareness, regardless of how it is labelled. McKinsey's analysis of high-performing AI adopters identifies role-based capability training as one of the defining features of organisations that successfully embed AI into business processes. Upskilling is role-specific by design: what a finance analyst needs to do better is not what a customer support agent needs to do better.

How are you measuring success? Completion rates and satisfaction scores measure awareness delivery. Behaviour change, output quality, and task performance measure upskilling outcomes. Mentoring-based upskilling, for example, lets organisations track not just completion rates but actual improvements in work quality, productivity, and the rate of AI-related errors. If your only measurement is whether employees finished the module, you are running an awareness program.

Does the training include practice with real tools on real tasks? Content that employees only watch or read builds awareness. Supervised practice on actual workflows builds skill. This is the single most reliable differentiator between the two: if employees leave the training having only consumed content rather than applied it, the program is awareness.

Building a Program That Does Both, in the Right Sequence

Awareness and upskilling are not competing investments. They are sequential ones. The mistake is not running awareness training. It is stopping there.

A well-structured AI training program uses awareness as a foundation, then builds role-specific upskilling on top of it. The sequence matters. Role-specific upskilling delivered to employees with no conceptual grounding produces confusion. Awareness training delivered without a clear pathway to applied practice produces completion rates and little else.

McKinsey describes this as the difference between treating upskilling as a training rollout and treating it as a change management effort. Organisations that approach it as a holistic change journey can accelerate adoption, unlock innovation, and build trust with their workforce at the same time.

Microsoft's Elevate initiative reflects this sequencing in practice. Launched in 2025, Microsoft Elevate is a $4 billion AI skilling initiative. Its Elevate Academy aims to credential 20 million people in AI within two years, working with LinkedIn Learning, GitHub, and Code.org to deliver training that ranges from AI basics for non-technical learners to advanced machine learning courses. The architecture is deliberate: foundation first, applied capability on top.

The practical implication for L&D teams is that program design should start at the upskilling end: what specific capability do we need employees to have, in which roles, by when — and then work backwards to identify what awareness content is genuinely prerequisite. Most programs are designed the other way around, which is why most programs stop at awareness. Building an AI learning culture covers what it looks like when organisations get this sequence right.

What Role-Specific Upskilling Looks Like in Practice

The most useful way to make the distinction concrete is through examples, because the gap between awareness and upskilling looks different depending on the role. The same principle applies across every function: awareness produces understanding; upskilling produces auditable behaviour change.

| Role | Awareness | Upskilling |
|---|---|---|
| Compliance and risk | Knows AI systems carry regulatory risk. Can describe the EU AI Act in general terms. Understands that AI tools may require governance. | Can conduct a preliminary assessment of whether a given tool falls within Annex III high-risk categories. Knows what documentation to request from a vendor. Understands the deployer obligations that apply when the organisation uses AI in consequential decisions. Knows when to escalate to legal. |
| HR and recruitment | Knows AI can assist in recruitment. Understands that AI bias is a concern. Has general familiarity with AI in HR contexts. | Has practised reviewing AI-generated candidate summaries for bias markers. Understands the worker notification obligations when AI is used in hiring. Can identify when a tool is being used outside its intended scope and knows how to intervene. |
| Marketing | Knows AI can help with content production. Has heard about prompt engineering. Understands AI can speed up drafting. | Writes briefs that consistently produce usable first drafts. Reviews AI output for brand fit, factual accuracy, and tone. Knows which content types AI handles poorly and when human drafting is faster and safer. |
| Finance | Knows AI tools exist in financial analysis. Has general awareness of AI in forecasting and modelling contexts. | Uses AI tools to accelerate data processing while applying consistent verification steps for model assumptions. Can communicate uncertainty in AI-assisted forecasts. Knows which outputs require human sign-off before reaching decision-makers. |

Employees with practical AI skills are substantially more likely to transition successfully into new roles created by automation than those with AI literacy alone. That is the difference between knowing and doing, and the difference that shows up in outcomes when the capability is actually tested.

Savia's learning paths are built around role-specific applied practice, not generic AI literacy modules, because completion rates are not the outcome your organisation needs.