Most L&D teams working on AI training are asking the right questions about content: what does this role need to know, what tools are we using, what does good output verification look like? Far fewer are asking the question that determines whether any of that content actually changes behaviour.

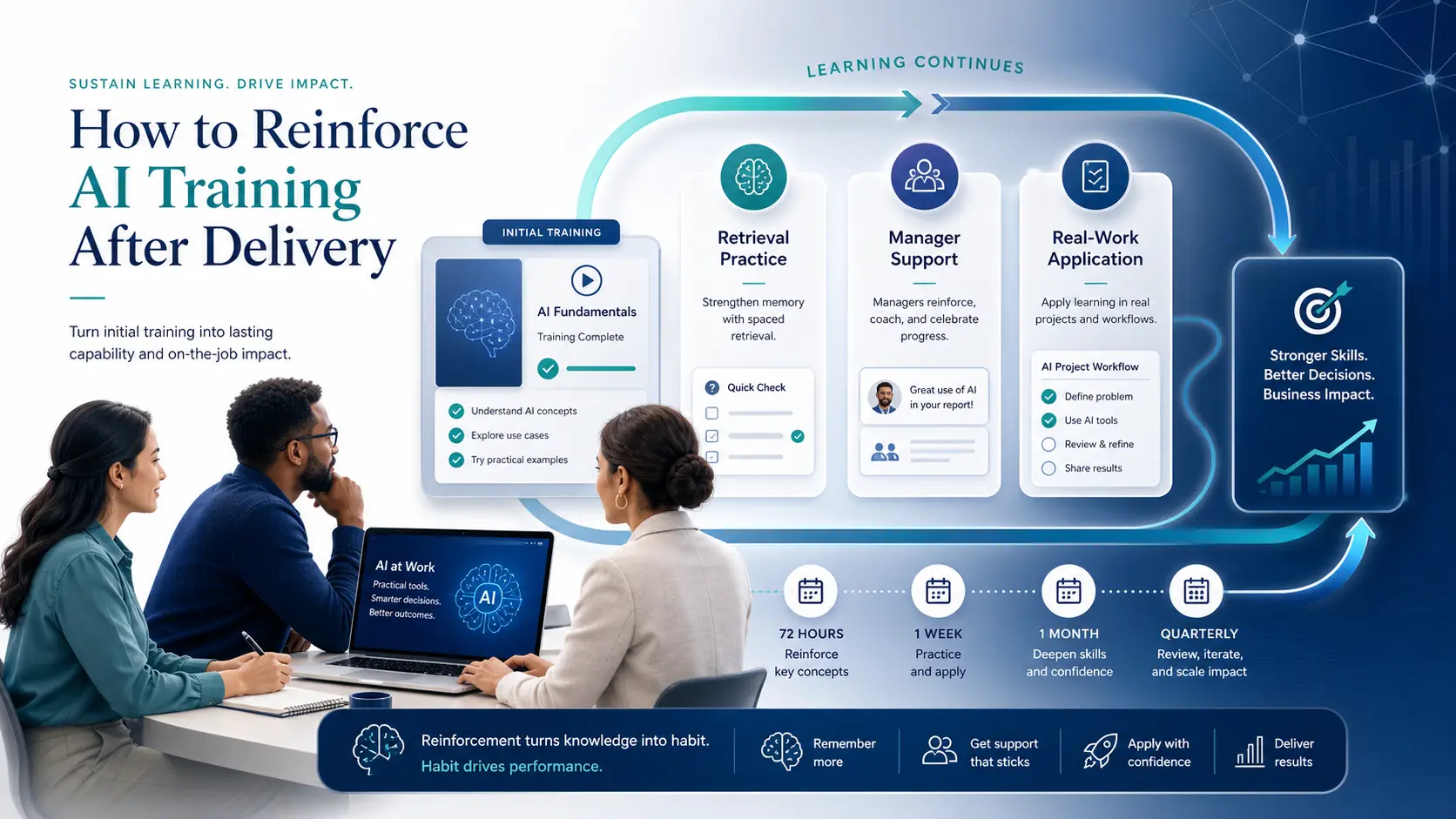

So, what exactly should they be doing? To reinforce AI training after delivery, combine spaced retrieval practice, manager follow-up, real-work application, and regular content reviews via microlearnings. Start within 24 to 72 hours of the training session, then use weekly, monthly, and quarterly touchpoints to keep skills current and turn one-time learning into workplace behaviour.

Only 12% of learners apply new skills without structured follow-up after training. That means roughly 88 cents of every training dollar produces no behaviour change unless a reinforcement structure exists alongside it. That's not a marginal loss. It's most of the budget.

For AI training specifically, the gap isn't trivial. Employees who complete AI training but don't change their behaviour are still pasting sensitive data into unapproved tools, still accepting AI-generated outputs without review, still missing the escalation pathways they were shown in a module they've since forgotten. So it's fair to wonder, what's the point of the training if the risk remains?

This article covers what reinforcement for AI training actually requires — the evidence behind it, the specific mechanisms that work, the manager's role, and how to build the reinforcement structure before the first session is delivered. It builds on the broader programme design covered in measuring the ROI of training.

Why AI Training Fades Faster Than Most, and Why That Matters

The forgetting curve applies to all workplace learning (and to nearly all learning in general). AI training faces two compounding factors that make reinforcement more urgent than for almost any other content your teams are being trained on.

Without reinforcement, workers forget up to 70% of new information within a day and up to 90% within a week. After one month, 70 to 90% of content is gone without spaced review. Those are the baseline figures for meaningful workplace content delivered well. AI training faces an additional pressure on top of this: the tools and workflows it covers change faster than almost any other domain employees are trained in.

Think about an employee who completed AI training six months ago and hasn't revisited it since. They've very likely encountered new embedded AI features in tools they use daily, new regulatory obligations their organisation is subject to, and new failure modes in AI outputs their role produces. None of those were covered in the original session. The training isn't just forgotten. It's also outdated.

The second compounding factor is the nature of the behaviour change AI training is trying to produce. Changing how employees handle data, verify outputs, and escalate concerns requires habitual change, not one-time awareness. Spaced repetition improves retention by around 200%. When combined with active application, the improvement compounds to roughly 300% better retention compared to traditional approaches. The implication is direct: single-session delivery cannot produce the habitual behaviour change AI training requires. It never could. The cost of treating AI training as a one-off event is covered in AI adoption without training.

The Reinforcement Timeline: What Needs to Happen and When

Reinforcement isn't what happens after training is forgotten. It's what prevents forgetting in the first place. And it needs to be designed before delivery — not scheduled afterwards as an afterthought.

The ideal reinforcement window is within 24 to 72 hours of the initial training. This timing interrupts the forgetting curve at its steepest point and strengthens memory before decay sets in. From there, spacing out refreshers — first weekly, then monthly — solidifies long-term retention without overwhelming the learner. The principle is simple: repeat at intervals just before knowledge begins to fade.

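For AI training, a practical reinforcement schedule built around that principle starts with a first touchpoint within 24 to 72 hours of the session, then moves to weekly, monthly, and quarterly touchpoints.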
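One way to turn that cadence into calendar dates is sketched below. The first touchpoint inside the 24 to 72 hour window and the weekly, monthly, and quarterly rhythm come from this article. The exact day offsets, the number of repeats, and the names `TOUCHPOINTS` and `reinforcement_schedule` are illustrative assumptions, not a prescribed standard.

```python
from datetime import date, timedelta

# Offsets from the training date. The cadence follows the article; the exact
# day counts and the number of repeats are illustrative assumptions.
TOUCHPOINTS = [
    ("first retrieval practice", timedelta(days=2)),  # inside the 24-72 hour window
    ("weekly refresher 1", timedelta(weeks=1)),
    ("weekly refresher 2", timedelta(weeks=2)),
    ("weekly refresher 3", timedelta(weeks=3)),
    ("monthly refresher 1", timedelta(days=30)),
    ("monthly refresher 2", timedelta(days=60)),
    ("quarterly content review", timedelta(days=90)),
]


def reinforcement_schedule(training_day: date) -> list[tuple[str, date]]:
    """Return (label, due date) pairs in chronological order."""
    return [(label, training_day + offset) for label, offset in TOUCHPOINTS]


if __name__ == "__main__":
    # Example: a session delivered on 5 January 2026.
    for label, due in reinforcement_schedule(date(2026, 1, 5)):
        print(f"{due.isoformat()}  {label}")
```

Generating the dates from the training date means the reinforcement calendar exists as soon as the session is booked, rather than being scheduled afterwards.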

The Three Mechanisms That Actually Work

Not all reinforcement is equally effective. Three mechanisms have the strongest evidence base for producing durable behaviour change in workplace contexts. None of them are difficult to implement. All of them are routinely skipped.

Applied exercises can be simple: a team challenge in the first month after training where each member brings one example of an AI output they verified and what they found; a shared log where employees record AI tools they encountered that week and whether each was on the approved list; or a brief post-incident review when an AI-related error occurs in the team's actual work.

None of these require additional budget. They require a manager who has been briefed and a team that has been given permission to surface what is actually happening. The framework that makes this oversight habit stick across the team is in building AI oversight.

The barriers to learning transfer aren't mysterious. Half of employees say their managers lack the support to help them apply new skills, and 45% cite a lack of personal support in doing so. Briefing managers before AI training is delivered, not after, is the single highest-leverage intervention available. It costs nothing beyond a fifteen-minute conversation per session.

What Reinforcement Must Cover That Initial Training Did Not

Reinforcement for AI training carries a content obligation that doesn't apply to most other training topics. The content itself must be updated, not just repeated.

The AI tools employees use in 2026 aren't the same as those covered in training delivered six months ago. Embedded AI features appear in new applications without announcement. Regulatory obligations shift: the EU AI Act's high-risk enforcement began in August 2026, and state-level HR and privacy laws came into force at various points through the year. Shadow AI categories expand as new tools reach consumer availability faster than governance frameworks can track them. The same update obligation applies to data protection training. See GDPR in 2026: what has changed for the parallel pattern.

Reinforcement content that simply repeats the original training without reflecting these changes produces a specific kind of harm: it creates a false sense of currency. Employees leave the reinforcement session believing their knowledge is current when the most important developments haven't been addressed. New shadow AI risks that need covering in reinforcement cycles are detailed in what is shadow AI.

The practical implication is that reinforcement cycles need a content review step, not just a scheduling step. Someone in L&D or compliance needs to own the question of what has changed since the last session and what needs to be reflected in the next reinforcement touchpoint. That's not a large job. But it's a job that needs to be assigned before the reinforcement calendar is built — not discovered missing six months in.

The Reinforcement Design Checklist: Build It Before Delivery

Reinforcement that's designed after training has been delivered is harder to execute, less effective, and more likely to be deprioritised when operational pressure hits. The reinforcement structure should be part of the training design process, produced alongside the session content rather than added afterwards.

Before any AI training module is delivered, L&D teams should be able to answer six questions. If any of them don't have a clear answer, the reinforcement structure isn't ready — which means the training isn't ready either.

Where does this fit alongside the rest of your AI training architecture? Diagnosing the starting point that reinforcement needs to build from is covered in assessing AI learning gaps, and the broader measurement framework that sits alongside reinforcement design is in measuring the ROI of AI training.

The Common Mistakes: What Most Reinforcement Gets Wrong

Three patterns account for most reinforcement failures in AI training programmes. None of them are subtle. All of them are common.

Treating reminders as reinforcement. An email with bullet points summarising the training session isn't reinforcement. It's re-exposure. It doesn't require retrieval, doesn't produce the cognitive effort that creates durable memory, and will be forgotten at the same rate as the original training. Reinforcement requires active application. Re-reading does not qualify.

Scheduling reinforcement after forgetting has already occurred. The ideal first reinforcement window is 24 to 72 hours after training. Skills decay 20% per week without practice. A reinforcement session scheduled three weeks after delivery isn't interrupting forgetting. It's attempting to recover from it — a less efficient use of the same resource.
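The 20% weekly decay figure can be read in two ways, and the figure alone does not say which is meant: as a compounding loss (each week removes 20% of what is left) or as a linear loss (each week removes 20% of the original). A rough sketch under both readings shows what a three-week delay costs. The function name `remaining_after` and the `compounding` flag are hypothetical, introduced only for this illustration.

```python
def remaining_after(weeks: int, weekly_decay: float = 0.20,
                    compounding: bool = True) -> float:
    """Fraction of a skill still retained after `weeks` without practice.

    Assumption: the 20% weekly figure is applied either to what is left
    (compounding) or to the original level (linear). Both are readings of
    the figure, not measured results.
    """
    if compounding:
        return (1 - weekly_decay) ** weeks
    return max(0.0, 1 - weekly_decay * weeks)


if __name__ == "__main__":
    for weeks in (1, 2, 3):
        compound = remaining_after(weeks)
        linear = remaining_after(weeks, compounding=False)
        print(f"week {weeks}: {compound:.0%} (compounding), {linear:.0%} (linear)")
```

Under either reading, roughly half or more of the skill is gone by week three, which is why a first session at that point is recovery instead of reinforcement.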

Making reinforcement optional. When reinforcement activities are positioned as optional resources rather than structured touchpoints with manager involvement and team accountability, completion rates collapse. The employees who most need reinforcement are consistently the least likely to engage with it voluntarily. Optional reinforcement is not a programme. It's a library.

Savia's AI learning programmes are designed from the first session with reinforcement built in: spaced retrieval practice, manager briefing packs, applied team exercises, and quarterly content review cycles that keep what employees learn current as the landscape changes.